Privacy is not disappearing in the age of AI. It is becoming more expensive, more technical, and more unevenly distributed.

That matters because the public conversation around AI privacy is often too simple. One camp assumes AI makes privacy obsolete: models need data, so data will inevitably be harvested, aggregated, and analyzed. The other camp imagines that stronger laws or better settings will restore a world where personal information stays neatly compartmentalized. Neither view matches the way the market is actually evolving.

The real story is messier. AI raises the value of data while also raising the cost of protecting it. That changes corporate behavior, regulatory pressure, and the daily experience of ordinary users. The winners will be the companies and institutions that can turn privacy into a product feature, a compliance advantage, or a trust signal. The losers will be consumers and smaller organizations that lack leverage, budget, or technical maturity.

Privacy is becoming a cost center with strategic value

In traditional software, privacy was often treated as a legal checkbox: publish a policy, manage consent, and minimize liability. AI systems turn that model inside out. Training data, inference logs, user prompts, voice samples, images, metadata, and behavioral traces all become raw material. Even if a company does not explicitly “sell” personal data, it may still use it to improve models, personalize services, detect fraud, or generate predictions that are commercially valuable.

This is why privacy is now a line item in infrastructure planning. Companies need data governance pipelines, retention rules, access controls, redaction tools, encryption, audit logs, and increasingly, model-level safeguards that prevent accidental memorization or leakage. None of that is cheap. For large platforms, the expense is manageable and even defensible if it reduces regulatory risk or strengthens customer trust. For smaller firms, privacy engineering can be a serious burden.

That economic asymmetry will shape the market. AI is likely to concentrate power not only because of compute and data scale, but because privacy compliance itself is easier to afford at scale. Large cloud providers, enterprise software vendors, and hyperscale AI firms can spread the cost across many products. Startups and mid-sized companies often cannot.

Regulation will define the floor, not the finish line

Policy is moving, but it is moving unevenly. The European Union has already pushed harder than most markets on data protection, and new AI rules are adding layers of documentation, transparency, and risk controls. In the United States, privacy policy remains fragmented: state laws, sector-specific rules, consumer protection actions, and a growing number of AI-focused proposals all coexist without a single national framework.

That fragmentation creates a practical reality: companies will not meet one universal privacy standard. They will build for the strictest jurisdiction they cannot afford to ignore, then localize from there. For global platforms, that means more sophisticated compliance operations and more granular controls around data residency, retention, and model training. For everyone else, it means uncertainty and defensive overcorrection.

Still, regulation matters because it sets the floor. It can force disclosure, limit certain kinds of automated decision-making, and impose consequences for careless data handling. It can also slow the most aggressive forms of surveillance capitalism by making the collection of data more visible and more contestable.

But regulation alone will not solve the problem. Laws can restrict what firms are allowed to do, yet they cannot erase the incentives that make data collection so attractive. AI systems improve when they can see patterns across users, contexts, and time. That creates enduring pressure to expand data access. The policy question is not whether that pressure exists. It is where society wants to draw the line.

The privacy market will split into premium and default tiers

One of the most important changes AI will bring is a tiered privacy economy. For many consumers, privacy will remain a default but limited service: acceptable protections, broad consent language, and a tradeoff between convenience and control. For those willing to pay, a more robust privacy layer will emerge through premium subscriptions, enterprise tools, encrypted devices, privacy-preserving assistants, and services that promise not to train on user data.

This is already visible in consumer tech and enterprise AI. Some services now offer opt-outs from model training, shorter data retention windows, or on-device processing. In enterprise settings, customers increasingly demand private deployments, virtual private clouds, dedicated instances, or contractual guarantees about how their data will be handled. In other words, privacy is being unbundled from the base product and sold as an upgrade.

That is economically rational, but socially uneven. If meaningful privacy becomes a premium feature, then wealthier users, larger enterprises, and regulated industries will have stronger protections than everyone else. The market will protect what it can monetize. It will not protect every user equally.

This also changes labor. Privacy professionals, compliance teams, security engineers, and AI governance specialists are becoming essential staff, not back-office overhead. Companies building AI systems need people who can map data flows, verify vendor behavior, interpret regulation, and operationalize controls inside product development. The demand is real, and so is the talent shortage. As with cybersecurity, the labor market may lag behind the urgency.

AI makes surveillance cheaper, but not automatically omnipotent

There is a temptation to think AI creates a total surveillance machine. In practice, the reality is more constrained. Yes, AI makes it easier to classify images, transcribe speech, infer intent, identify anomalies, and connect data points that used to live in separate systems. That lowers the cost of monitoring across workplaces, retail environments, public spaces, and digital platforms.

But surveillance is still bounded by budgets, technical error, legal risk, and social resistance. Models can hallucinate, misclassify, or overfit. Data pipelines can be poisoned or incomplete. Companies that overcollect also increase their exposure to breach risk, litigation, and reputational damage. In other words, the same systems that make tracking easier also make data misuse more visible and more punishable.

This is where policy and market forces intersect. A regulator may not be able to stop every invasive use case, but it can increase the cost of abuse. A consumer may not read a privacy policy, but they can abandon a product after a public scandal. A buyer may not fully trust an AI vendor, but they can demand contractual protections and audits. Privacy in an AI world will be shaped less by abstract ideals than by friction: the friction of compliance, the friction of switching costs, and the friction of public backlash.

The most durable privacy tools will be technical, not rhetorical

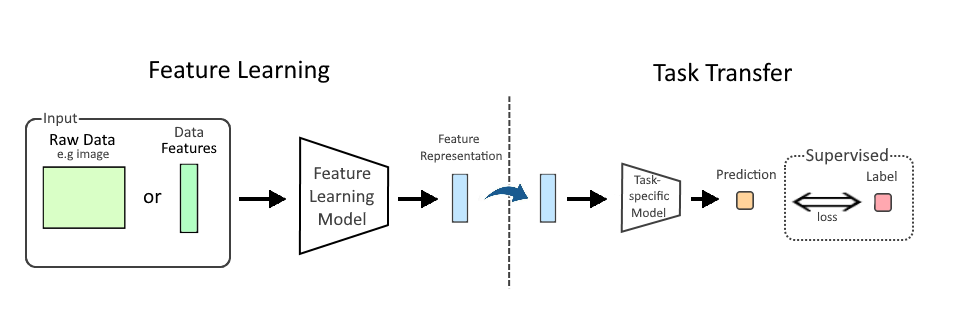

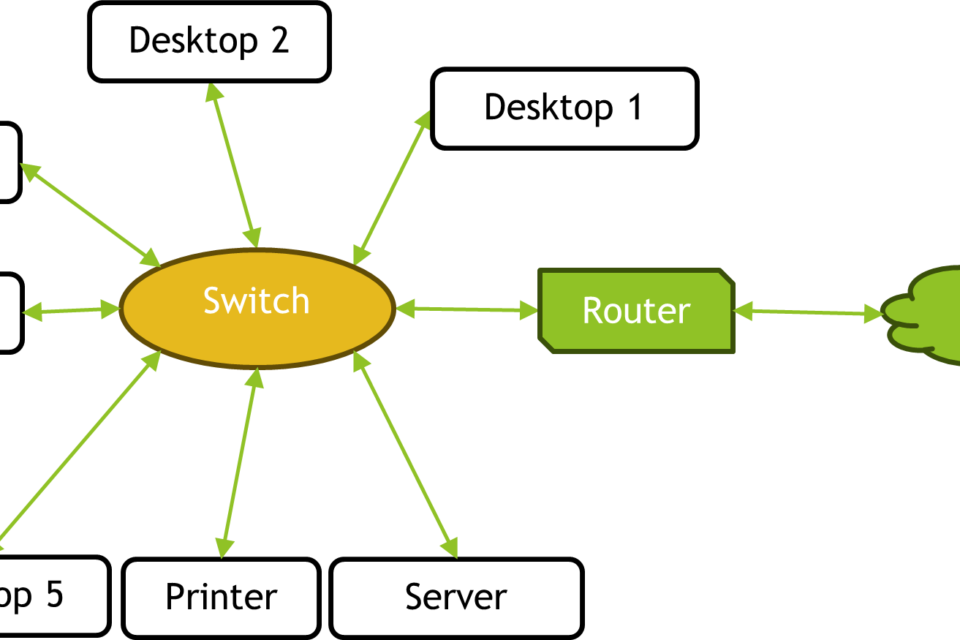

If privacy is to survive in an AI economy, it will need better engineering. The most credible approaches are not slogans about “responsible AI” but concrete systems that reduce the exposure of raw personal data in the first place. That includes data minimization, on-device processing, differential privacy, federated learning, secure enclaves, encryption at rest and in transit, and strong access control around training and inference pipelines.

Not every tool fits every use case. Federated learning is not a universal replacement for centralized training. Differential privacy can reduce utility if applied poorly. On-device AI can improve confidentiality but depends on device capability and battery tradeoffs. Secure enclaves can help protect sensitive processing, but they are not magic shields. The point is not that any one method solves privacy. It is that privacy is most defensible when it is designed into the system architecture rather than bolted on afterward.

That reality creates a competitive advantage for firms that invest early. If privacy-preserving infrastructure becomes a requirement rather than a feature, then companies with modern data governance, better model tooling, and stronger security practices will move faster under regulation, not slower.

What the future likely looks like

The future of privacy in an AI world is not a binary choice between surveillance and freedom. It is a negotiation among laws, product design, market power, and user expectations. Some forms of privacy will improve: better transparency, tighter controls, stronger enterprise contracts, and more local processing. Other forms will erode: passive data collection, invisible profiling, and the assumption that personal information stays confined to one service or one context.

The biggest risk is not that privacy disappears overnight. It is that society accepts a slowly degrading baseline because convenience is immediate and the harms are diffuse. AI systems make that danger especially acute because they can extract value from information that once seemed harmless in isolation. A location trace, a message pattern, a voice sample, a browsing session, a workplace interaction: each may appear trivial. Together, they can paint a remarkably detailed picture of a person’s life.

The best-case outcome is not a return to a pre-digital past. It is a more disciplined digital economy where data collection is narrower, controls are stronger, and the costs of exploitation are high enough to change behavior. That will require policy, yes, but also investment in technical safeguards and a willingness by buyers to pay for privacy rather than assume it is free.

Privacy in the AI era will survive where institutions make it worth the cost. The question is whether that cost gets paid by platforms, regulators, employers, and governments—or mostly by individuals, one hidden tradeoff at a time.

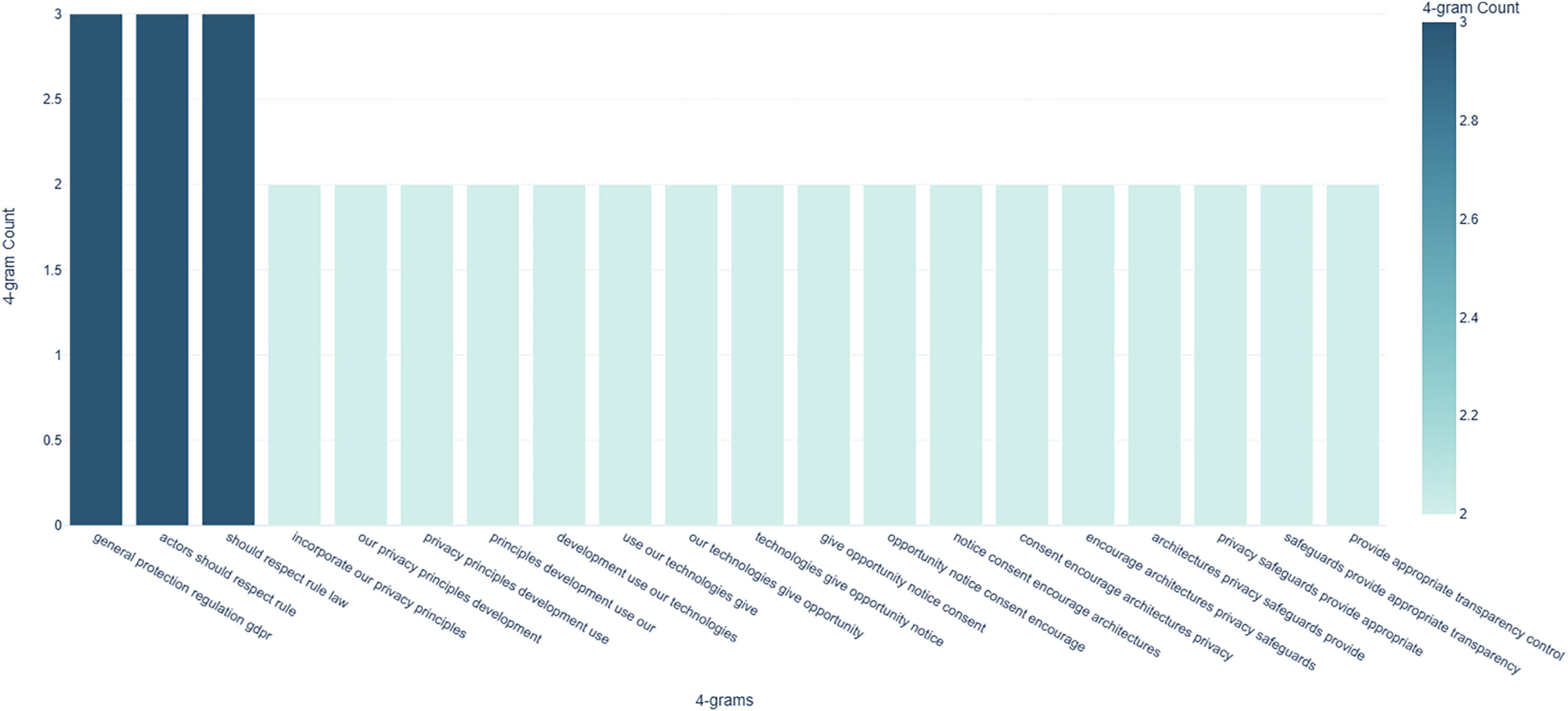

Image: N-gram analysis results – top 20 recurrent 4-g for the 137 descriptions of "privacy" in AI governance guidelines.jpg | https://www.cell.com/patterns/fulltext/S2666-3899(23)00241-6 | License: CC BY 4.0 | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:N-gram_analysis_results_%E2%80%93_top_20_recurrent_4-g_for_the_137_descriptions_of_%22privacy%22_in_AI_governance_guidelines.jpg