Privacy Is No Longer a Binary Choice

For most of the internet era, privacy was framed as a simple trade: give up some data in exchange for useful services. AI has made that bargain more complex. Modern systems do not merely store information; they infer, predict, and recombine it at scale. A search history becomes a profile. A profile becomes a risk score. A risk score becomes a decision that can affect pricing, access, hiring, insurance, content exposure, or even whether a human reviews your case at all.

This matters because AI changes the economics of data collection. When computation was expensive and model performance limited, companies had only a weak incentive to hoard everything. Now, large models and cheap inference make more data useful for longer. The result is not just more surveillance in the obvious sense. It is a broader environment in which ordinary behavior can be converted into machine-readable signals and monetized or acted upon in ways users may never see.

The future of privacy in an AI world will not be decided by a single breakthrough or regulation. It will be shaped by a sustained tug-of-war between product convenience, corporate incentives, public backlash, and the technical limits of keeping data both useful and controlled.

The Market Incentive Is to Collect First, Restrict Later

From a company’s point of view, more data often means better models, better personalization, lower fraud, and stronger retention. AI pushes that logic further. If a model can summarize your messages, predict what you need next, or automate your work, then it needs access to deeper layers of context: emails, documents, calendars, voice, location, purchase history, and behavior across devices.

That creates a structural privacy problem. The value of AI often depends on reducing friction, and the easiest way to reduce friction is to centralize data. Cloud-scale AI systems are built on vast storage, telemetry, logging, and continuous feedback loops. Even when firms promise to “anonymize” data, modern inference can re-identify people from combinations of supposedly harmless details. The more powerful the model, the more it can learn from patterns that are not obviously personal on their own.
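The re-identification point can be made concrete with a toy check. The sketch below is illustrative only: the records, field names, and the `unique_fraction` helper are all hypothetical, and real datasets are far messier. It measures how many rows in a name-stripped table are still singled out by a combination of ordinary-looking fields.

```python
from collections import Counter
from itertools import product

# Hypothetical "anonymized" table: names removed, quasi-identifiers kept.
records = [
    {"zip": z, "birth_year": y, "gender": g}
    for z, y, g in product(["02139", "02140"], [1985, 1990], ["F", "M"])
]

def unique_fraction(rows, fields):
    """Fraction of rows whose combination of `fields` appears exactly once."""
    keys = [tuple(row[f] for f in fields) for row in rows]
    counts = Counter(keys)
    return sum(1 for k in keys if counts[k] == 1) / len(rows)

for fields in (["zip"], ["zip", "birth_year"], ["zip", "birth_year", "gender"]):
    print(fields, unique_fraction(records, fields))
```

In this contrived table no single field, and no pair of fields, isolates anyone, yet all three together make every row unique. That is the pattern the paragraph above describes: the identifying power sits in the combination, not in any one detail.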

There is also a competitive dimension. In many markets, the company with the most user data has a real advantage, which can entrench large platforms and make it harder for smaller firms to compete. That is not just a privacy issue; it is a market structure issue. If the path to a better AI product requires more access to intimate data, privacy becomes a barrier to entry unless regulators or technical standards create a fairer baseline.

What Regulation Can Do, and What It Cannot

Policy is not powerless, but it has hard limits. The strongest privacy laws can force transparency, consent, retention limits, purpose restrictions, and user access rights. They can also create penalties for misuse and give regulators a way to audit high-risk systems. In practice, that means better rules around training data, stronger controls over sensitive categories such as biometrics and health information, and clearer obligations when AI systems make consequential decisions.

But regulation cannot magically make complex systems simple. The challenge with AI is that data can be embedded in weights, prompts, logs, embeddings, and downstream outputs. Once information has been used to train a model, removing it completely is technically difficult and sometimes only partially possible. “Delete my data” is straightforward in a database; it is much harder in a model that has already absorbed statistical traces from millions of records.

That makes enforcement more important than broad slogans. Policymakers will need to focus on the points where control is still feasible: collection, retention, access, training provenance, and output monitoring. They will also need to distinguish between low-risk consumer personalization and high-stakes uses such as employment screening, policing, credit, housing, education, and healthcare. A privacy framework that treats all AI use cases the same is unlikely to work.

There is another complication: rules that are too strict in one jurisdiction can push development elsewhere without improving real-world privacy. The goal should not be to strangle useful AI applications, but to make data handling legible, contestable, and proportionate to the risk. That is a harder political task than simply demanding “more privacy,” but it is the one that matters.

The Technical Answer Is Real, But Incomplete

The technology industry is not standing still. Privacy-preserving techniques are improving, including differential privacy, secure enclaves, federated learning, data minimization, synthetic data, and on-device inference. These approaches can reduce exposure by keeping more data local or limiting what a model can learn about any one person.

Still, none of these methods is a universal solution. Differential privacy, for example, can protect individuals while preserving aggregate utility, but it introduces a tradeoff: too much noise and the model degrades; too little and the protection weakens. Federated learning keeps data on the device, but it still requires coordination, model updates, and careful protections against leakage from gradients or metadata. On-device AI can improve privacy by avoiding cloud transfer, yet it depends on powerful edge hardware and often cannot match the scale or flexibility of centralized systems.
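The noise tradeoff in differential privacy can be sketched with the Laplace mechanism applied to a simple counting query. This is a minimal illustration, not a hardened implementation: the synthetic ages, the `private_count` and `mean_abs_error` helpers, and the chosen epsilon values are all assumptions made for the example.

```python
import random

def laplace_noise(scale, rng):
    # The difference of two i.i.d. exponentials with mean `scale`
    # follows a Laplace distribution centered at 0 with that scale.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)

def private_count(values, predicate, epsilon, rng):
    """Noisy count; adding or removing one person changes a count by at most 1."""
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1 / epsilon, rng)

def mean_abs_error(epsilon, trials=2000, seed=0):
    """Average distance between the noisy answer and the true count."""
    rng = random.Random(seed)
    ages = [rng.randint(18, 90) for _ in range(1000)]  # synthetic data
    is_senior = lambda a: a >= 65
    true_count = sum(1 for a in ages if is_senior(a))
    errors = [
        abs(private_count(ages, is_senior, epsilon, rng) - true_count)
        for _ in range(trials)
    ]
    return sum(errors) / trials

for eps in (0.1, 1.0, 10.0):
    print(eps, round(mean_abs_error(eps), 2))
```

A smaller epsilon means stronger protection and a noisier answer; a larger epsilon gives a more accurate count and a weaker guarantee. The average error tracks roughly 1/epsilon, which is the tradeoff in numerical form.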

That hardware constraint is important. Privacy is not just a legal or ethical question; it is a compute architecture question. If more AI runs locally on phones, laptops, vehicles, or industrial devices, then the privacy profile changes. If everything routes to large data centers, the opposite happens. The trajectory of GPUs, NPUs, and edge accelerators will therefore shape the privacy landscape as much as regulation will.

In other words, the industry’s privacy story will be determined by system design choices: what gets processed at the edge, what gets sent to the cloud, what is logged, what is deleted, and what is retained for model improvement. Those are engineering decisions, but they are also governance decisions.
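One way to picture the edge-versus-cloud split is a toy version of federated averaging, in which raw records stay with each client and only model weights travel to the server. The sketch below assumes a one-parameter model `y = w * x` and made-up client data. It leaves out everything a real deployment would need, such as secure aggregation and defenses against the gradient leakage mentioned earlier.

```python
def local_update(w, data, lr=0.1, steps=5):
    """Run a few gradient steps on one client's data for the model y = w * x."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

def federated_average(w, clients, rounds=20):
    """Each round, clients train locally; the server sees only weights and sizes."""
    total = sum(len(data) for data in clients)
    for _ in range(rounds):
        updates = [(local_update(w, data), len(data)) for data in clients]
        # Weight each client's result by how many examples it trained on.
        w = sum(w_i * n for w_i, n in updates) / total
    return w

# Hypothetical clients; every point lies on y = 3x and never leaves its list.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(0.5, 1.5), (1.5, 4.5), (1.0, 3.0)],
    [(2.0, 6.0)],
]

w = federated_average(0.0, clients)
print(round(w, 4))
```

The server recovers the shared slope without ever receiving a raw data point. What it does receive, the per-client weights, is exactly the kind of signal that still needs protection in practice.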

Consumers Will Want Convenience Until Trust Breaks

Public opinion on privacy often looks inconsistent because most people are neither privacy absolutists nor pure pragmatists. They make tradeoffs. Most users will tolerate a lot of data collection if the service is clearly useful, if the cost is low, and if the company appears trustworthy. That is why many privacy concerns remain abstract until there is a visible breach, a manipulative recommendation system, or a decision that feels unfair.

AI changes this dynamic by making the invisible more powerful. A system that predicts what you are likely to buy is one thing. A system that predicts your vulnerabilities, stress patterns, or willingness to click is another. When users realize that private data is not merely being stored but actively interpreted, consent becomes less meaningful unless it is informed, specific, and easy to withdraw.

That is where the market may eventually correct itself. Companies that mishandle data or use AI in ways people experience as invasive can destroy trust quickly. But markets are slow to punish diffuse harms. The cost of a privacy failure is often distributed unevenly: the consumer absorbs most of the harm, while the company bears only part of it, mainly as reputational damage. That gap is why public policy still matters.

What a Durable Privacy Framework Looks Like

The future is unlikely to be defined by absolute privacy or total exposure. It will be defined by managed compromise. A workable framework would include several elements: data minimization by default, explicit limits on secondary use, strong protections for sensitive data, clear audit trails for model training, and real user rights to access, correct, export, and delete where technically possible.
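Two of those elements, minimization by default and limits on secondary use, can be expressed as a small purpose-bound policy. The sketch below is hypothetical throughout: the `POLICY` table, purposes, field names, and retention periods are invented for illustration and do not reflect any particular law or product.

```python
from datetime import datetime, timedelta

# Hypothetical policy: the fields each purpose may see, and how long records live.
POLICY = {
    "fraud_check": {"fields": {"account_id", "amount", "country"}, "retention_days": 90},
    "recommendations": {"fields": {"account_id", "category"}, "retention_days": 30},
}

def minimize(record, purpose):
    """Return only the fields the stated purpose is allowed to use."""
    allowed = POLICY[purpose]["fields"]
    return {k: v for k, v in record.items() if k in allowed}

def is_expired(collected_at, purpose, now):
    """True once a record has outlived the retention window for its purpose."""
    return now - collected_at > timedelta(days=POLICY[purpose]["retention_days"])

record = {
    "account_id": "a1",
    "amount": 42.5,
    "country": "DE",
    "category": "books",
    "home_address": "(omitted)",
}

print(minimize(record, "recommendations"))
print(is_expired(datetime(2024, 1, 1), "recommendations", datetime(2024, 3, 1)))
```

The design choice is that a purpose must be named before any field is released, and anything not on the allowlist is dropped rather than passed along. A purpose that was never declared raises an error instead of silently receiving data.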

It would also require a more honest conversation about asymmetry. Individuals cannot negotiate data terms one app at a time against every major platform and model provider. That is why privacy has to be treated as a system-level issue, not just a settings menu. Standards, procurement rules, sector-specific regulation, and competitive pressure all have a role to play.

Just as important, society will have to decide which AI uses are worth the privacy cost. A medical system that helps detect disease earlier may justify different data handling than an ad platform optimizing engagement or a workplace monitoring tool scoring employee behavior. The point is not to ban all data-intensive AI. It is to demand a tighter connection between the benefit being promised and the privacy being surrendered.

The Real Tradeoff Is Power

At bottom, the future of privacy in an AI world is not only about personal dignity, though that matters. It is about power: who sees what, who can infer what, who can act on it, and who has the right to say no. AI makes those questions more urgent because it turns raw data into predictive power at industrial scale.

The optimistic case is that better laws, better hardware, and better design will make AI more private than the first generation of cloud-centered systems. The harder truth is that every gain will require effort. Privacy will not survive as a default condition of digital life. It will have to be engineered, enforced, and defended.

That is the downstream consequence of the AI era: privacy is becoming less like a background expectation and more like a strategic choice. The societies that get this right will not eliminate data collection. They will constrain it well enough that people still trust the systems built on top of it.

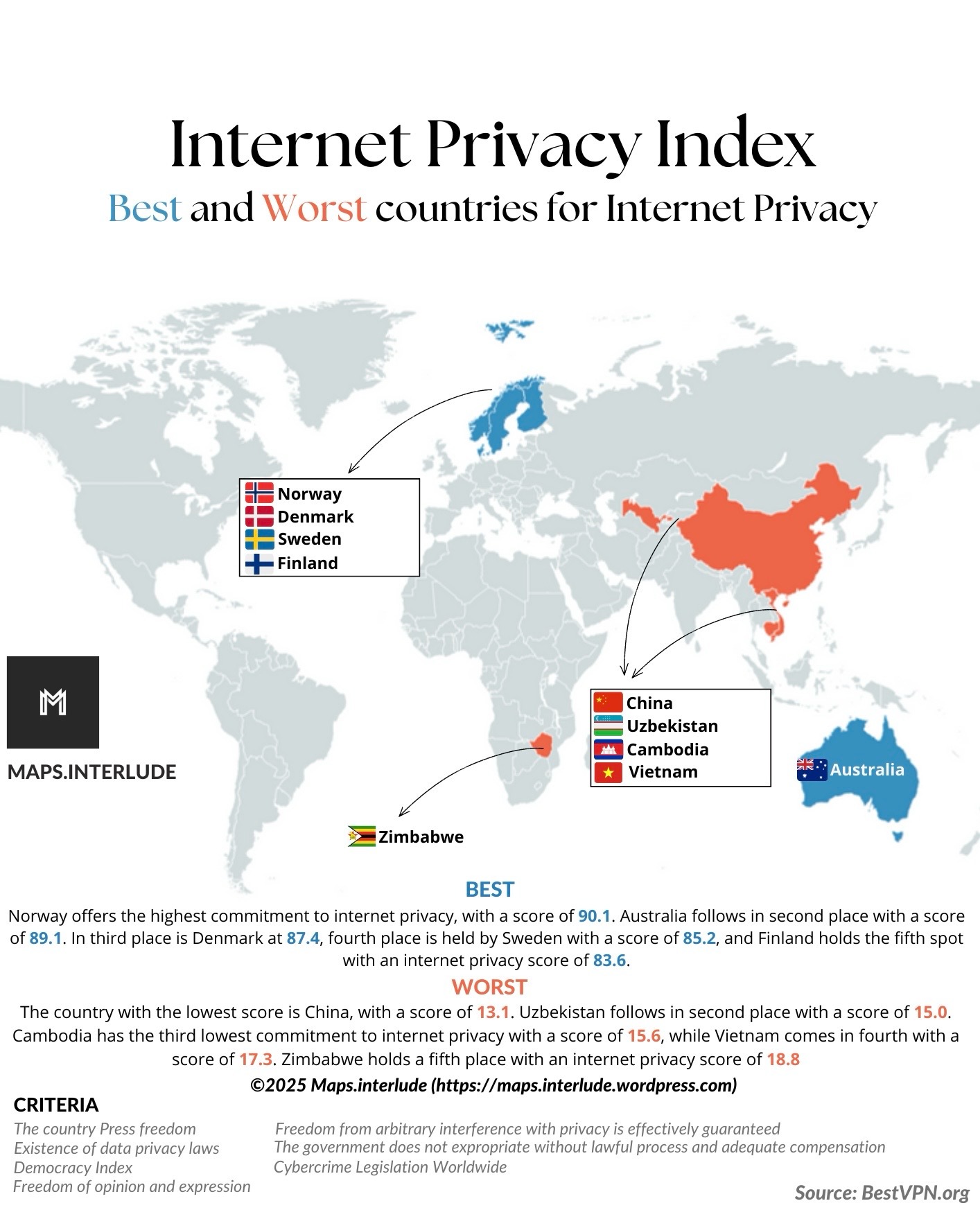

Image: Internet privacy Index.jpg | Own work | License: CC BY 4.0 | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:Internet_privacy_Index.jpg