Generative AI is not just another software feature

Generative AI is everywhere because it addresses a problem that has challenged computer science for decades: how to make software produce useful new output instead of only following fixed rules. Instead of simply classifying an image, routing a request, or retrieving a file, generative models can draft text, write code, create images, summarize documents, generate audio, and increasingly help with planning and decision-making. That capability has made them one of the most visible shifts in technology in years.

But the reason generative AI matters is not just what it can produce. It is the infrastructure behind it. Training and serving these models requires enormous amounts of compute, memory bandwidth, networking, storage, and power. That means generative AI is not just a software story. It is a systems story that reaches into semiconductors, cloud platforms, data center design, and electricity markets.

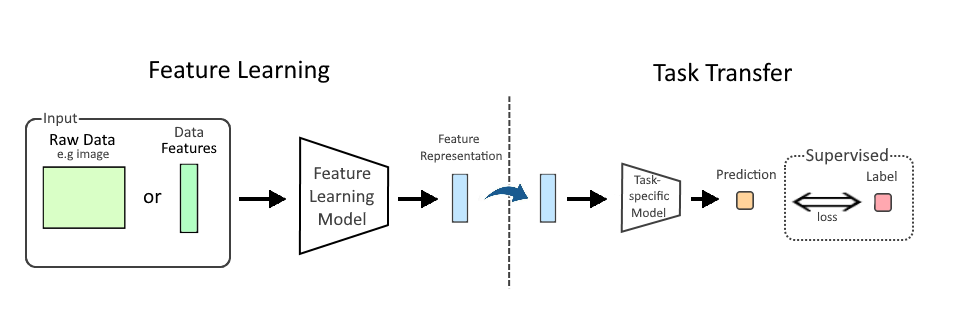

What generative AI actually is

At a basic level, generative AI refers to models trained on large datasets to produce new content that resembles patterns in the training data. The most common systems today are large language models, which predict the next token (roughly a word or word fragment) based on the preceding context. Image models work in a similar spirit, learning patterns that allow them to synthesize visual output from prompts. Other variants generate code, audio, video, molecular structures, and design candidates.

The key distinction is this: traditional machine learning often recognizes or ranks. Generative AI creates. That creative ability is probabilistic, not magical. The model does not “know” facts in the human sense. It computes likely continuations from patterns learned during training. When it works well, the result feels intelligent because it can generalize across domains, recombine ideas, and handle ambiguous requests in natural language.
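To make "likely continuations" concrete, here is a minimal sketch of how a next token might be chosen. The vocabulary and scores are invented for illustration; a real model produces scores over tens of thousands of tokens, and the function names here are hypothetical rather than taken from any library.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert raw scores into a probability distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(vocab, logits, temperature=1.0, rng=random):
    """Pick one token at random, weighted by its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical scores a model might assign after "The capital of France is"
vocab = ["Paris", "Lyon", "London", "banana"]
logits = [5.0, 2.0, 1.5, -3.0]

probs = softmax(logits)
next_token = sample_next_token(vocab, logits, rng=random.Random(0))

for token, p in zip(vocab, probs):
    print(f"{token:>7}: {p:.3f}")
print("sampled:", next_token)
```

The highest-scoring token is the most probable choice, but not the only possible one. Lowering the temperature concentrates probability on the top token, while raising it spreads probability toward less likely continuations.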

That flexibility is why generative AI spread so quickly. A single interface—a chat box, prompt field, or embedded assistant—can sit on top of many tasks. It can summarize a meeting, draft a marketing email, help a developer debug code, or assist a support agent with responses. For businesses, that lowers the barrier to adoption. For consumers, it makes the technology feel immediate.

Why it exploded now

Generative AI did not appear out of nowhere. Several trends converged at once.

First, model architectures improved. Transformer-based systems, introduced in 2017, proved unusually effective at handling language and other sequential data. Second, training data became plentiful enough to support large-scale pretraining across text, code, images, and multimodal datasets. Third, the hardware matured. Modern GPUs, especially those optimized for matrix math, made it practical to train and run large models at scale. Fourth, cloud distribution put these systems within reach of companies that could not build their own supercomputing stacks.

Then came the product layer. Chat interfaces made advanced AI accessible to non-specialists. Instead of requiring a data scientist or custom workflow, users could ask for output in plain English. That simple user experience turned a technical breakthrough into a mass-market phenomenon.

The real engine: compute, memory, and power

Generative AI is often discussed as if it were mainly about software and prompts. In practice, it is constrained by physical infrastructure.

Training a frontier model can require thousands of accelerators working together for weeks or months. These chips must move data quickly between memory and compute units, and between one another, which is why memory bandwidth and interconnects matter so much. Accelerators like NVIDIA’s H100 and its successors matter not just because they are fast, but because they are built for the dense matrix arithmetic that modern AI relies on.
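One rough way to see why memory bandwidth matters is a back-of-envelope bound on text generation speed. The sketch below assumes that producing each token for a single request requires reading every model weight from memory once, and it ignores batching, cache traffic, and compute limits. The model size, precision, and bandwidth are hypothetical round numbers, not the specification of any particular chip.

```python
def max_decode_tokens_per_second(num_params, bytes_per_param, bandwidth_bytes_per_s):
    """Rough ceiling on single-stream decode speed when weight reads dominate."""
    if num_params <= 0 or bytes_per_param <= 0 or bandwidth_bytes_per_s <= 0:
        raise ValueError("all inputs must be positive")
    weight_bytes = num_params * bytes_per_param
    return bandwidth_bytes_per_s / weight_bytes

# Hypothetical inputs, chosen as round numbers rather than any product's spec.
NUM_PARAMS = 70e9       # 70 billion parameters
BYTES_PER_PARAM = 2     # 16-bit weights
BANDWIDTH = 3e12        # 3 TB/s of memory bandwidth

ceiling = max_decode_tokens_per_second(NUM_PARAMS, BYTES_PER_PARAM, BANDWIDTH)
print(f"Upper bound: about {ceiling:.1f} tokens per second per stream")
```

Under these assumptions the ceiling is set by how fast weights can be streamed from memory, not by how fast the chip can multiply. Doubling bandwidth, or halving the bytes per weight, doubles the bound.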

Inference, the process of serving a trained model to users, is equally important. A consumer chatbot or enterprise assistant may seem lightweight from the user side, but behind the scenes every response is generated token by token, and each token consumes GPU cycles, memory, and network capacity. As usage scales, inference can become a large operational cost. That is one reason companies are so focused on model efficiency, quantization, caching, and specialized inference hardware.
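Quantization is the easiest of those techniques to show in a few lines. The sketch below uses simple symmetric 8-bit quantization with a single shared scale, which is one of several possible schemes and not necessarily what any given product uses. The weight values are made up for illustration.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

# Hypothetical weights; real layers hold millions of values.
weights = [0.12, -0.53, 0.98, -1.27, 0.004]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("stored integers:", q)
print("largest rounding error:", max_error)
```

Each integer fits in one byte instead of the four bytes of a 32-bit float, so the weights take a quarter of the memory. The cost is a rounding error of at most half a quantization step per value, which is why very small weights can collapse to zero.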

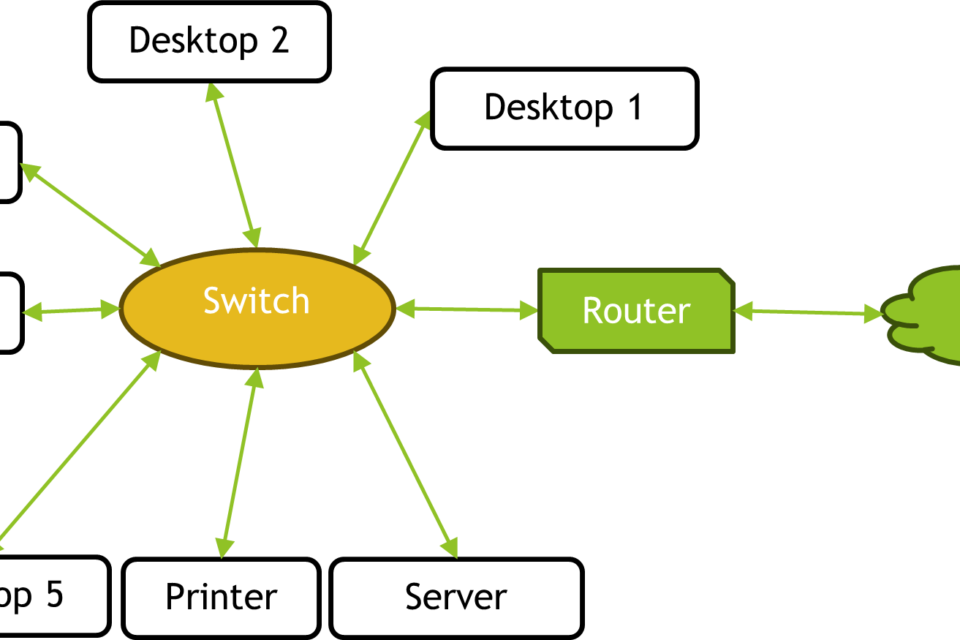

This is why data centers are changing. AI workloads favor high-power racks, advanced cooling, dense networking, and supply chains that can support larger electrical loads. The bottleneck is no longer just server count. It is whether a site can secure enough power, electrical transformers, cooling infrastructure, and grid access to support AI-scale operations.

Why every company suddenly wants it

Generative AI looks like a universal layer because it can sit on top of existing workflows rather than replacing them outright. That makes it attractive to software vendors, cloud providers, retailers, banks, industrial firms, and media companies alike.

For software companies, it offers a new interface and a new pricing model. For enterprises, it promises productivity gains in document-heavy work, customer support, analytics, and internal knowledge search. For chipmakers and cloud platforms, it creates demand for premium infrastructure. For automation vendors and robotics teams, it can provide better planning, perception, and human-machine interaction.

In other words, generative AI is useful not because it does one thing better than all other systems, but because it can act as a general-purpose layer across many domains. That versatility is what makes it strategically important.

Where the limits still are

For all the excitement, generative AI is not a solved problem. The systems can hallucinate, meaning they produce plausible but incorrect output. They can reflect biases in training data. They can be sensitive to prompt phrasing. They can struggle with long-context reasoning, exact recall, and tasks that require reliable factual grounding.

That creates a practical reality for deployment: generative AI works best when it is bounded, supervised, and connected to trusted data sources. In enterprise settings, that often means retrieval-augmented generation, workflow approvals, logging, human review, and domain-specific fine-tuning. The strongest uses are not “replace the worker,” but “compress the time it takes for a worker to get to a first draft, a starting point, or a decision path.”
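Retrieval-augmented generation is simpler than the name suggests: find the most relevant trusted text, then place it in the prompt. The sketch below is a toy version that scores documents by word overlap. Production systems typically use learned embeddings and a vector index instead, and the documents, function names, and prompt wording here are all hypothetical.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors."""
    shared = set(a) & set(b)
    dot = sum(a[t] * b[t] for t in shared)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(question, documents, top_k=1):
    """Return up to top_k documents that share words with the question."""
    q_vec = Counter(tokenize(question))
    scored = [(cosine_similarity(q_vec, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:top_k] if score > 0]

def build_prompt(question, documents, top_k=1):
    """Assemble a prompt that grounds the model in retrieved text."""
    context = retrieve(question, documents, top_k)
    context_block = "\n".join(f"- {c}" for c in context) or "- (no relevant documents found)"
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context_block}\n"
        f"Question: {question}"
    )

# Hypothetical knowledge base entries.
documents = [
    "Refunds are processed within 14 days of receiving the returned item.",
    "Support hours are 9:00 to 17:00 on weekdays.",
    "Enterprise plans include single sign-on and audit logging.",
]

prompt = build_prompt("How long do refunds take?", documents)
print(prompt)
```

The resulting prompt would then be sent to a model. The point of the design is that the answer can be traced back to a specific source document, which is what makes logging and human review practical.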

There is also a cost issue. The same scale that makes these models impressive also makes them expensive to train and serve. As a result, the economics of generative AI depend heavily on utilization rates, model size, latency targets, and whether a company can reduce inference cost per useful output.
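The arithmetic behind "inference cost per useful output" can be sketched directly. Every number below is a hypothetical placeholder, including the hourly accelerator price, the throughput, and the share of outputs that users actually accept. The function names are illustrative as well.

```python
def cost_per_million_tokens(gpu_hour_cost, tokens_per_second, utilization):
    """Serving cost per million generated tokens at a given utilization."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return gpu_hour_cost / tokens_per_hour * 1_000_000

def cost_per_useful_output(cost_per_m_tokens, tokens_per_output, acceptance_rate):
    """Cost of one output the user keeps, counting the ones they discard."""
    if not 0 < acceptance_rate <= 1:
        raise ValueError("acceptance_rate must be in (0, 1]")
    cost_per_output = cost_per_m_tokens * tokens_per_output / 1_000_000
    return cost_per_output / acceptance_rate

# Hypothetical inputs for illustration only.
GPU_HOUR_COST = 2.00       # dollars per accelerator-hour
TOKENS_PER_SECOND = 1000   # aggregate throughput across batched requests

busy = cost_per_million_tokens(GPU_HOUR_COST, TOKENS_PER_SECOND, utilization=1.0)
half_idle = cost_per_million_tokens(GPU_HOUR_COST, TOKENS_PER_SECOND, utilization=0.5)
useful = cost_per_useful_output(half_idle, tokens_per_output=500, acceptance_rate=0.8)

print(f"fully utilized: ${busy:.2f} per million tokens")
print(f"half utilized:  ${half_idle:.2f} per million tokens")
print(f"per accepted 500-token output: ${useful:.5f}")
```

Halving utilization doubles the cost per token, and every rejected output raises the cost of the ones that are kept. That is why utilization and output quality matter as much to the economics as raw hardware price.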

Why it matters beyond the chat box

The broader significance of generative AI is that it is reshaping how computing gets built and bought. The technology is changing procurement decisions for GPUs, high-bandwidth memory (HBM), networking gear, and power equipment. It is accelerating investment in hyperscale data centers. It is influencing semiconductor roadmaps. It is pushing utilities and regulators to pay more attention to data center load growth. And it is changing expectations for what software should do by default.

That is why generative AI is everywhere. It is not just a popular application. It is the visible edge of a much larger industrial shift. Every new model raises questions about chip supply, cloud capacity, latency, safety, and power. Every product release nudges companies to rethink workflows. Every deployment reveals the gap between demo and durable operations.

In that sense, generative AI is less a single technology than a new operating layer for digital systems. The companies that understand both halves of that layer, the software on one side and the infrastructure on the other, are the ones most likely to make sense of what comes next.

The practical takeaway

If you are trying to understand generative AI without getting lost in the hype, start here: it is a model class that generates new content from learned patterns, and its rise is being driven as much by infrastructure as by product design. The important questions are no longer only “What can it do?” but also “What does it cost to train, serve, and scale?” and “What kind of compute and power does it require?”

That framing matters because generative AI is changing more than interfaces. It is changing the economics of software, the architecture of data centers, and the priorities of the semiconductor industry. That is why it is everywhere—and why it will keep showing up in places far beyond the chat window.

Image: Demonstration of DreamBooth AI model fine-tuning for Stable Diffusion using Jimmy Wales training data from Wikimedia Commons.png | Own work | License: Public domain | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:Demonstration_of_DreamBooth_AI_model_fine-tuning_for_Stable_Diffusion_using_Jimmy_Wales_training_data_from_Wikimedia_Commons.png