Large language models have become the defining interface of the AI boom. They draft emails, summarize reports, write code, answer questions, and power a fast-growing layer of software products. But the public conversation often treats them like magic boxes: input a prompt, receive an answer, and accept the rest on faith. That framing obscures the real story. LLMs are not mystical. They are highly engineered prediction systems built on top of vast datasets, transformer architectures, and immense compute infrastructure.

If you want to understand where AI is actually going, you need to understand how these models work at a systems level. Not just the algorithm, but the training pipeline, the hardware requirements, the deployment constraints, and the reason they can sound fluent while still being wrong. That is where the useful picture begins.

The core idea: predicting the next token

At the simplest level, a large language model is trained to predict the next token in a sequence. A token is not always a word. It can be part of a word, a whole word, punctuation, or even a symbol. The model reads a string of tokens and assigns probabilities to what should come next.

This may sound modest, but it is the engine behind everything else. During training, the model sees enormous amounts of text and repeatedly learns a single task: given the context so far, what token is most likely to follow? Over time, it becomes very good at capturing grammar, style, facts, patterns, reasoning-like structures, and statistical relationships embedded in language.

The key point is that LLMs do not retrieve answers from a built-in encyclopedia. They generate text by estimating what sequence of tokens is most plausible. That is why they can be impressively coherent and still produce incorrect details with absolute confidence.

Why transformers changed the game

The architecture that made modern LLMs practical is the transformer. Earlier language models struggled to retain useful information across long passages. Transformers solved much of that problem with an attention mechanism that lets the model weigh different parts of the input when producing each new token.

In plain English: when the model is processing a sentence or prompt, attention helps it decide which prior words matter most right now. If you ask about a company, a date, and a product in the same prompt, the model uses attention to connect those pieces instead of treating the text as a flat stream.

This is why transformers scale so well. They can ingest large context windows, model relationships across large spans of text, and train efficiently on modern parallel hardware. That combination made the current wave of AI possible.

Training: where the real cost lives

Training an LLM is where the industry spends most of its money and compute. The process starts with massive datasets assembled from books, websites, code repositories, academic papers, and other text sources. Those data are cleaned, filtered, tokenized, and fed through the model over and over again.

During training, the model adjusts billions—or in leading systems, trillions—of parameters. Parameters are the internal numerical settings that determine how strongly the model responds to different patterns. Think of them as the learned memory of the system, not in the human sense, but as a huge web of statistical associations.

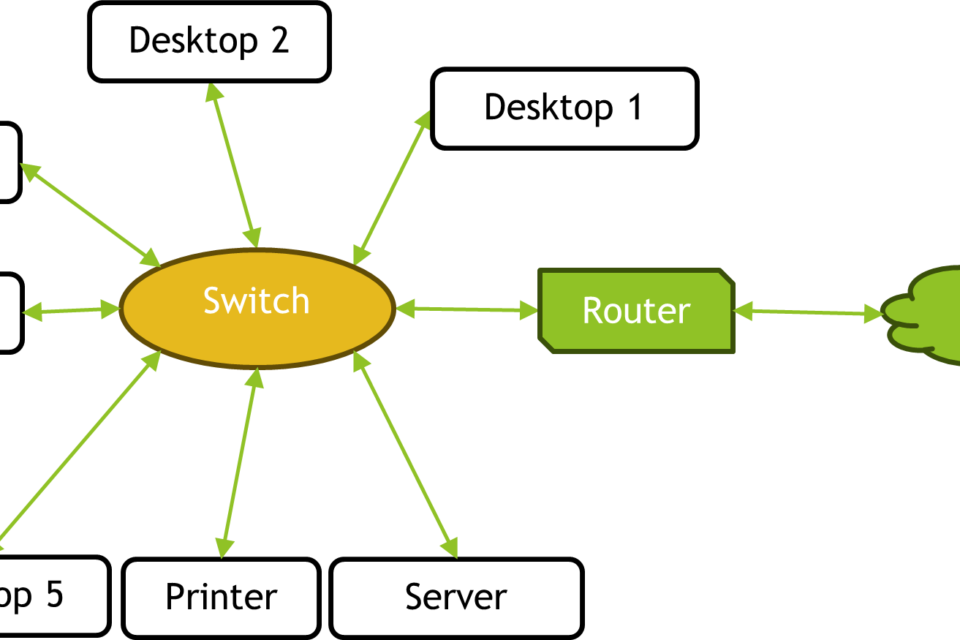

Training runs on clusters of GPUs or other accelerators connected by high-speed networking. This is not just an AI story; it is a data center story. Power delivery, cooling, memory bandwidth, interconnects, and supply chain planning all matter because training is constrained by infrastructure as much as by software design.

That is one reason AI has become so tightly linked to semiconductors and cloud providers. The model may be the visible product, but the enabling asset is the compute stack underneath it.

Inference: the hidden economics of everyday AI

Once a model is trained, it enters inference: the stage where it generates answers for users. Inference is not free. Every response requires the model to process the prompt, maintain internal state, and produce tokens one by one.

This matters because inference economics shape the entire business. A model that is cheap to train but expensive to run can still be difficult to commercialize at scale. That is why companies obsess over latency, throughput, memory use, quantization, batching, and the number of tokens generated per request.

In practice, model performance is inseparable from hardware efficiency. Faster GPUs, larger memory pools, better serving software, and smarter scheduling all help reduce the cost of each answer. The industry’s push toward smaller models, distillation, and specialized inference chips is a direct response to this reality.

Why LLMs sound smart even when they are not reliable

One of the most confusing aspects of LLMs is that they often sound more authoritative than they are accurate. That is not a bug in the conversational interface; it is a consequence of the training objective. The model is optimized to produce likely text, not to verify truth.

That distinction explains hallucinations, a catchall term for outputs that are fluent but false. If the model has seen enough patterns to produce a convincing answer, it may do so even when the underlying fact is missing, ambiguous, or contradictory. In other words, fluency is not the same as knowledge.

This is why retrieval systems, tool use, and grounding matter so much. When a model is connected to search, databases, internal documents, or external tools, it can anchor its responses in real information rather than relying only on probabilistic text generation.

Reasoning, memory, and the limits of context

LLMs can appear to reason because they are good at producing stepwise text that resembles reasoning. But their internal process is not the same as human deliberation. They are pattern models that can imitate forms of logic, derive intermediate steps, and chain together sub-problems, especially when prompts are structured well.

Still, they have limits. The model only “sees” the context window currently available to it. If important information falls outside that window, it may be forgotten. Long conversations, large codebases, and complex multi-document tasks can break down unless the surrounding system manages context carefully.

That is why LLM products are increasingly not just models, but orchestration layers. They combine retrieval, memory, guardrails, tool calling, and workflow logic to make the raw model more useful and more dependable.

What matters most for the next phase of AI

The biggest mistake people make is treating the model as the entire system. In reality, the model is one layer in a stack that also includes data pipelines, training infrastructure, deployment software, monitoring, safety filters, and user-facing product design.

For industry readers, that means the important questions are shifting. Which workloads require frontier-scale models? Which can be handled by smaller, cheaper systems? Where does inference cost dominate? How much can tool use reduce model size without sacrificing utility? Which organizations have the data, distribution, and compute to build defensible systems?

Those questions matter because the LLM market is maturing from a model race into a systems race. The winners will not necessarily be the companies with the largest parameter counts. They will be the ones that can convert model capability into reliable products at acceptable cost, latency, and energy use.

The practical takeaway

Large language models work by learning statistical patterns in language at massive scale, then using those patterns to predict the next token in a sequence. That simple mechanism, powered by transformers and enormous compute, produces tools that can write, summarize, code, and converse with uncanny flexibility.

But the operational reality is more important than the demo. LLMs depend on expensive training runs, fast inference infrastructure, careful product layering, and human oversight. They are powerful because they are scalable systems, not because they think like people.

Once you see them that way, the hype falls away and the actual industry comes into focus: semiconductors, cloud capacity, data centers, model efficiency, and the ongoing effort to turn probabilistic text generation into something commercially and operationally reliable.

Image: Gain induit CPU- GPU- TRI2.JPG | printed screen of my own statistique from http://boincstats.com/stats/boinc_user_graph.php?pr=bo&id=1210 | License: CC BY-SA 4.0 | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:Gain_induit_CPU-_GPU-_TRI2.JPG