AI training is a systems problem, not just a chip problem

People often talk about AI training as if it were simply a matter of buying faster GPUs. In practice, training modern models is a full-stack infrastructure problem. The compute itself matters, but so do memory bandwidth, networking, storage throughput, software efficiency, power delivery, and cooling. Once a model grows beyond a single accelerator or even a single server, the challenge shifts from raw performance to coordination.

That is why AI training consumes so much infrastructure. A large training run is not just one machine doing math faster than a normal computer. It is thousands of chips repeatedly exchanging data, synchronizing updates, and waiting on one another so that the whole model can improve together. Every delay in that chain becomes wasted time, wasted electricity, and wasted capital.

What training actually does to the hardware

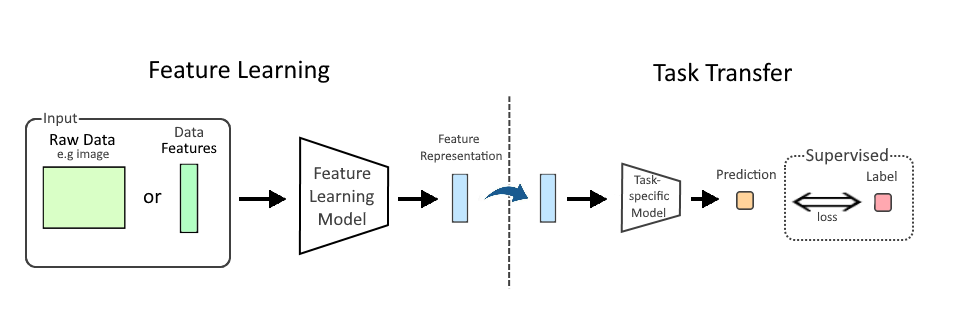

At its core, training a model means processing enormous amounts of data, performing matrix operations, measuring error, and adjusting billions or even trillions of parameters. The math is highly repetitive and highly parallel, which is exactly why GPUs became the center of the market. They can execute many operations at once, and their software stacks are optimized for dense numerical work.

But the headline metric most people hear—flops—only tells part of the story. A chip can have extraordinary theoretical compute and still be underused if it cannot move data quickly enough. Training workloads are often constrained by memory bandwidth, not just arithmetic throughput. The model weights, activations, gradients, and optimizer states all have to move through the system continuously. If the memory subsystem is too slow, the compute units sit idle.

This is one reason high-bandwidth memory has become so important in AI accelerators. HBM sits physically close to the compute die and can deliver much more data per second than conventional memory. That lowers the chance that a GPU starves for input. In training, starvation is expensive: every idle cycle on an expensive accelerator is lost productivity across the entire cluster.

Why one chip is never enough

Earlier generations of machine learning could sometimes be trained on a single GPU or a small box of GPUs. Frontier models are different. The models are too large, the data too abundant, and the time-to-train targets too aggressive. As a result, training is distributed across many accelerators, often in the thousands.

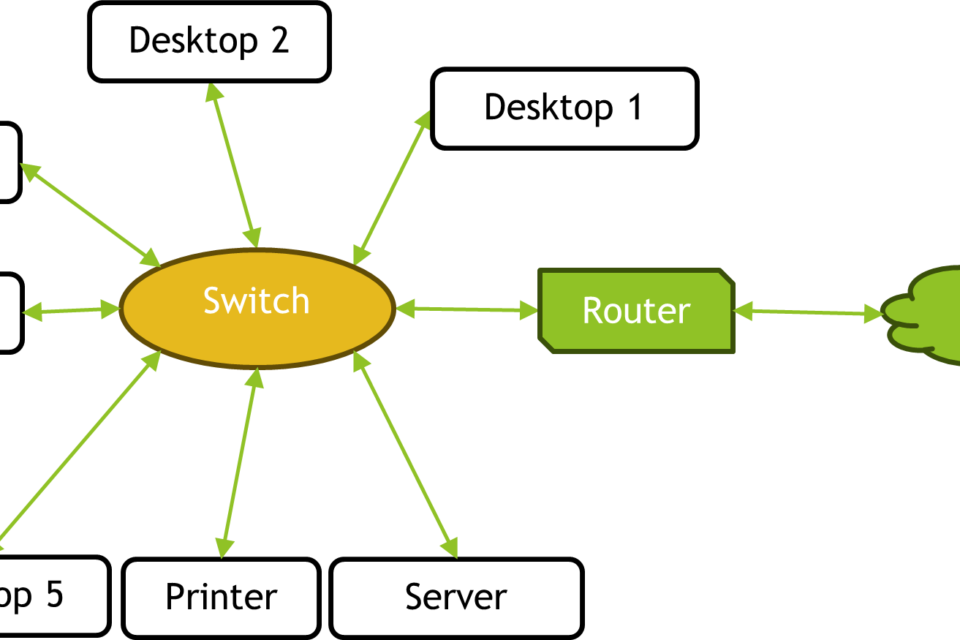

That introduces a new bottleneck: communication. When a model is split across multiple GPUs, those devices must exchange intermediate results constantly. In data parallel training, every worker processes a different slice of data and then shares gradients. In tensor or pipeline parallelism, the model itself is split across devices, which increases the amount of coordination required during each forward and backward pass. Both approaches improve scale, but both also add overhead.

The more you distribute the job, the more time you spend moving data instead of computing. That is the central tradeoff. More chips can mean faster training, but only if the network connecting them is fast enough and the software can keep them synchronized. Otherwise, you have a very expensive cluster spending too much of its time waiting.

The hidden importance of the interconnect

For AI training, the interconnect is often as important as the accelerator itself. Inside a server, high-speed links such as NVLink or comparable chip-to-chip fabrics reduce communication overhead among GPUs. Across servers, Ethernet and InfiniBand-class networking determine whether a large cluster behaves like a coordinated machine or like a group of isolated islands.

When the interconnect is weak, scaling breaks down. Adding more GPUs no longer produces proportional speedup because each device spends more time on synchronization. This is why some training runs benefit from specialized systems built around tightly coupled GPU pods, while others can use more general-purpose clusters with less aggressive networking. The tradeoff is straightforward: specialized infrastructure costs more, but it can deliver much better utilization and lower training time for demanding models.

In comparison-minded terms, the difference looks like this: a cheap cluster can be easier to buy, but a well-balanced cluster is easier to use efficiently. For large training jobs, efficiency usually wins because the cost of idle compute overwhelms the initial hardware savings.

Power and cooling are part of the compute budget

AI training also demands so much power because modern accelerators are power-dense devices. High-end GPUs and AI chips can draw hundreds of watts each, and a dense rack can consume tens of kilowatts or more. Multiply that across many racks and the energy requirement becomes a major operating constraint, not just an accounting detail.

That power has to be delivered reliably, converted efficiently, and removed as heat. Data centers that were built for enterprise workloads may not be suitable for dense AI deployments without serious upgrades. The electrical path from grid connection to rack-level distribution becomes a limiting factor. So does cooling, especially as air cooling runs into practical limits at high rack density.

This is one of the clearest places where AI training infrastructure breaks down. The chip may be available, but the site may not have enough power, enough cooling, or enough space to host it effectively. In other words, the compute is not just purchased from a supplier; it is embedded in a physical environment that must be engineered end to end.

Why memory, storage, and data pipelines matter more than they seem

Training is often described as compute-bound, but the best training systems are data pipelines as much as they are chip clusters. Large models require vast datasets, and those datasets must be cleaned, stored, staged, and fed to the training job at high speed. If data loading is inefficient, the GPUs wait. If the storage subsystem cannot sustain throughput, the training loop stalls.

That is why modern AI infrastructure includes fast local storage, parallel file systems, object storage layers, and elaborate data orchestration. Training jobs are sensitive to small inefficiencies at scale. A minor bottleneck that barely matters on one server can become a major drag across a thousand GPUs.

There is also a software angle here. Frameworks, compilers, kernel fusion, precision formats such as FP16 or BF16, and distributed scheduling all influence how much useful work the hardware performs. Better software can reduce the number of wasted cycles and lower the total compute required for a given training run. But software can only stretch efficiency so far. If the cluster is mismatched to the workload, or the network is weak, no optimization layer can fully compensate.

Comparison across deployment paths: hyperscale, on-prem, and rented cloud

There are three broad ways to access training compute, and each one reflects a different tradeoff.

Hyperscale buildouts offer the best path to large, tightly integrated training runs. These environments can be designed around the needs of AI from the start: high-density racks, custom power architecture, optimized cooling, and fast interconnects. The downside is cost, lead time, and dependence on a limited set of suppliers and sites.

On-prem enterprise deployments give organizations more control over data and scheduling, but they often struggle to match the efficiency of purpose-built AI facilities. They may face constraints in power, networking, or cooling, and they can be hard to scale quickly.

Rented cloud compute lowers the barrier to entry and is excellent for experimentation, smaller training runs, and burst capacity. But for frontier-scale training, cloud economics can become punishing, especially if the workload is long-running and network-intensive. You are paying not just for chips, but for someone else’s margin, facility overhead, and scheduling flexibility.

The right choice depends on scale, urgency, and control requirements. The larger and more synchronized the training job, the more infrastructure matters—and the more attractive dedicated systems become.

Where the model of endless scaling starts to fail

There is a temptation to assume that more compute always means better AI. Sometimes that is true. But the scaling curve is not infinite, and each step up in scale is harder than the last. At some point, the bottleneck shifts from available GPUs to networking, then to power, then to memory, then to data quality, then to economics.

That is where the infrastructure story becomes strategically important. AI training is no longer just a software race. It is a contest over semiconductor supply, advanced packaging, memory availability, grid access, cooling design, and facility buildout. The winners will not simply own the fastest chips; they will be the organizations that can assemble the entire stack and keep it fed.

In plain terms, AI training needs so much compute because the work is enormous, the parallelism is unforgiving, and the supporting infrastructure is part of the machine. The bottlenecks are no longer abstract. They are physical, electrical, and architectural. And as models keep growing, the real question is not whether compute matters. It is whether the surrounding system can keep up.

Image: 1990s home computer office New Orleans.jpg | Own work | License: CC BY-SA 4.0 | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:1990s_home_computer_office_New_Orleans.jpg