From Model Training to Real-World Service

Training a frontier AI model gets the headlines, but deployment is where the economics become real. Once a model leaves the lab, it has to answer requests reliably, quickly, and cheaply, often millions or billions of times. That shift sounds simple on paper. In practice, it turns an algorithm into a full production system built on GPUs, networking, storage, orchestration software, monitoring tools, and operational rules that determine whether the model is useful or merely impressive.

This is the part of AI that business leaders increasingly care about. A model that performs well in a benchmark is not automatically a model that can survive traffic spikes, latency targets, privacy constraints, or budget pressure. At scale, the challenge is not just intelligence. It is throughput, uptime, and cost per inference.

Inference Is the Real Product

Most public attention focuses on model training, where companies assemble massive clusters of GPUs to teach a model patterns from large datasets. But once training is complete, the commercial value usually comes from inference: the act of using the model to generate text, classify images, recommend products, route customer support tickets, detect fraud, or control robots.

Inference has different physics than training. Training can tolerate long jobs and batch processing. Inference is judged by response time, consistency, and efficiency under unpredictable demand. A chatbot serving one user at a time has a different load profile than an enterprise search system handling thousands of concurrent queries or a logistics application making decisions in real time. The infrastructure must be designed for that difference.

That is why the deployment stack increasingly centers on serving systems that can manage queueing, batch requests intelligently, route traffic to the right model version, and keep latency within a narrow window. In many cases, the model itself is only one layer of the product.

The Hardware Layer: GPUs, Memory, and the Cost of Tokens

At scale, the first question is not simply which model to run, but where to run it. GPUs remain the dominant compute engine for modern AI deployment because they offer the parallelism needed for large neural networks. But raw GPU count is only part of the equation. Memory bandwidth, interconnects, and cluster topology can be equally important, especially for large models that do not fit neatly on a single accelerator.

For operators, the key metric is often not benchmark peak performance but cost per token, cost per query, or cost per successful transaction. That pushes teams to optimize aggressively. Smaller or distilled models may be used for common tasks, reserving larger systems for hard cases. Quantization can reduce memory use and improve throughput. Speculative decoding and caching can lower latency. In some deployments, a model is fine-tuned for a narrow domain because a smaller specialized system is simply cheaper to run than a general-purpose one.

This is where the semiconductor story becomes inseparable from the software story. Chip design decisions affect what kinds of model architectures are economically viable. Likewise, deployment demands feed back into chip procurement, cluster planning, and data center expansion. A company deploying AI at scale is not just buying silicon; it is making an infrastructure bet.

Orchestration: Turning Compute into a Service

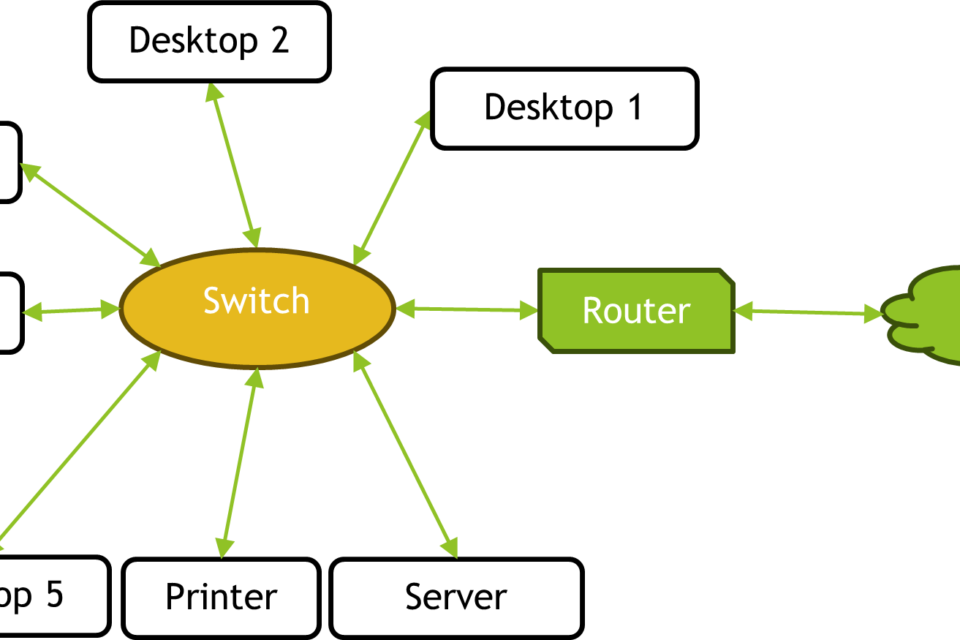

Once hardware is in place, the next challenge is coordination. AI models at scale are rarely deployed as isolated binaries. They are managed by orchestration layers that handle scheduling, autoscaling, failover, versioning, access control, and observability. This is the difference between a promising prototype and a service that can be used by customers every day.

Container systems and distributed computing frameworks are widely used to make model serving elastic. When demand rises, new replicas spin up. When traffic falls, capacity is reduced to save money. Load balancers distribute requests across nodes. Feature stores and vector databases may provide real-time context. API gateways enforce authentication and rate limits. In a mature deployment, no single service does everything; instead, a coordinated chain delivers the answer.

That coordination matters because production AI is brittle in specific ways. A model can drift as user behavior changes. A retrieval system can surface stale documents. A dependency can fail and create cascading latency. Without strong orchestration and monitoring, the visible product may appear to work while quietly degrading in quality.

Data Centers Are Becoming AI Factories

The boom in AI deployment has changed what a data center is for. Traditional enterprise infrastructure was designed around storage, databases, and general-purpose applications. AI-heavy environments are increasingly optimized around dense accelerator clusters, high-speed networking, advanced cooling, and power delivery. In practical terms, the modern AI data center is closer to a factory than a server room.

That shift has immediate business consequences. Power availability can be the limiting factor, not demand. Utilities, regulators, and local governments are now part of AI deployment decisions because a cluster of GPUs can draw enormous electrical load and require upgraded substations or new transmission planning. Cooling is no longer a background facility concern; it affects how much compute can fit in a building and what it costs to operate.

For cloud providers and large enterprises alike, deployment strategy now intersects with energy infrastructure. That includes site selection, water use, grid resilience, and long-term contracts for electricity. The physical reality of AI deployment is one reason the industry is moving from experimentation toward industrial planning.

Reliability, Guardrails, and Human Oversight

Scaling AI is not just a question of making a model faster. It is about making it safer to expose to real users and real workflows. Companies deploy guardrails to reduce harmful outputs, constrain the model’s behavior, and catch obvious failures before they reach customers. These can include content filters, policy engines, schema validation, retrieval constraints, and human review for higher-risk tasks.

In customer service, finance, healthcare, and other regulated sectors, deployment often includes a human-in-the-loop layer. The model may draft a response, score a risk, or summarize information, while a person approves the final action. This is not a sign that the system has failed. It is often the only acceptable way to make AI operational in environments where mistakes carry legal or reputational costs.

Another reason for oversight is calibration. A model that sounds confident can still be wrong. At scale, confidence without controls becomes a liability. Businesses therefore invest not only in model quality but in mechanisms that limit exposure when the model is uncertain or the context is outside its training envelope.

The Economics of Serving: Why Deployment Shapes Strategy

Large-scale deployment changes how companies think about AI strategy. A model is not valuable simply because it exists; it must produce more revenue, better margins, or lower operating costs than it consumes in compute and staffing. That reality is pushing firms toward architectures that are cheaper to serve, easier to monitor, and more tightly matched to business needs.

Some companies build around one general model and offer it broadly. Others use a model router that sends simple tasks to lightweight systems and difficult tasks to more capable ones. Many are choosing retrieval-augmented setups that combine a smaller model with proprietary data, reducing the need to over-scale a single foundation model. In all cases, deployment is becoming a strategic differentiator.

This is also why the market keeps rewarding infrastructure vendors. If AI is moving into the production core of the enterprise, then the winners will not be determined solely by model size. They will also be determined by the layers that make those models dependable, affordable, and compliant.

What Actually Matters Next

The next phase of AI deployment is likely to be defined by efficiency, specialization, and control. Teams will continue to pursue better models, but the bigger gains may come from better serving systems, better chip utilization, lower power consumption, and smarter routing between models. As costs fall and tools mature, AI will spread into more products and workflows, but only if it can be operated like any other critical system.

That is the central truth of deploying AI at scale: the technology stops being interesting only as a model and becomes consequential as infrastructure. The companies that understand that distinction will not just ship AI features. They will build durable businesses around them.

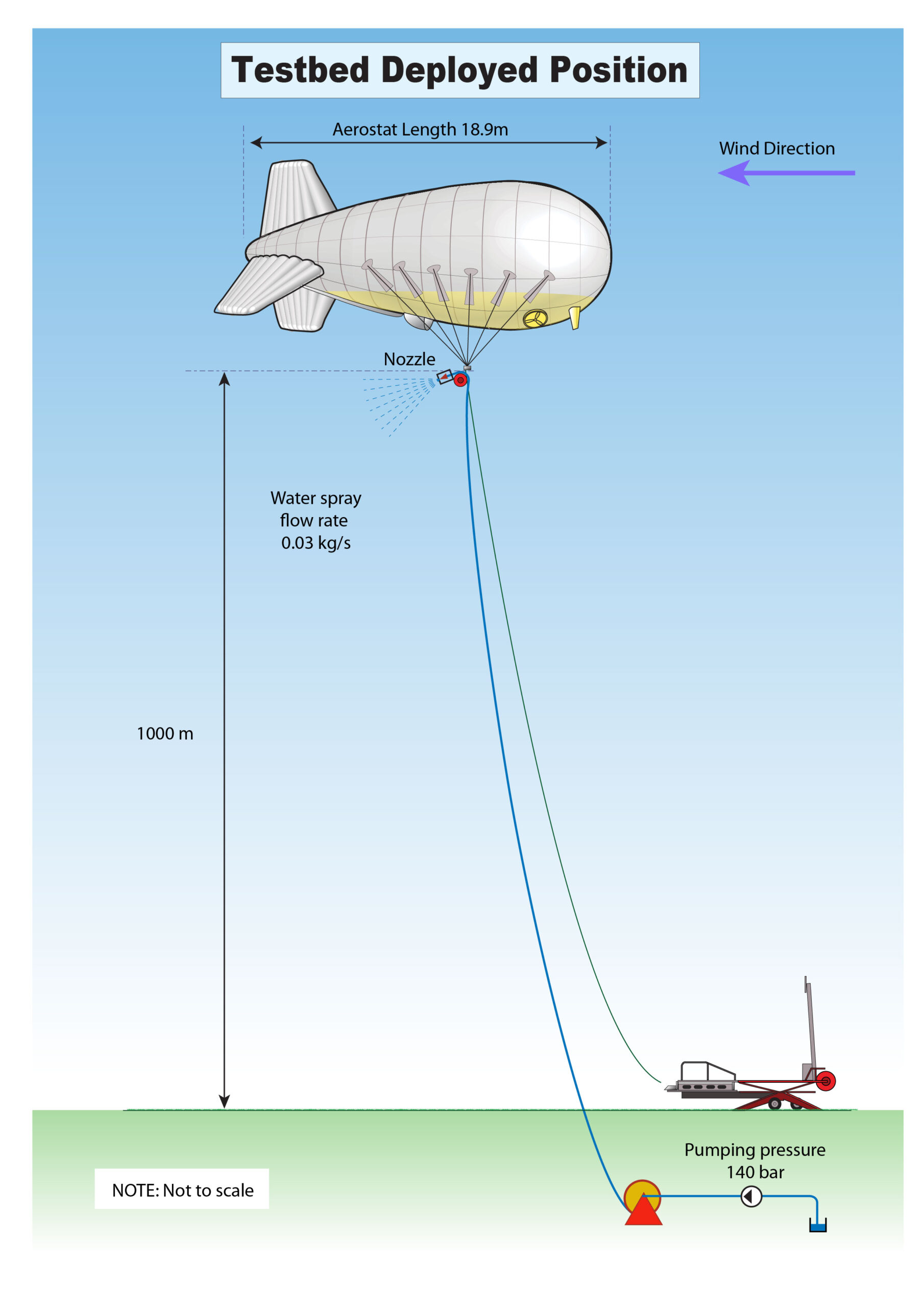

Image: SPICE TESTBED – DEPLOYED POSITION.jpg | Own work – see also Stilgoe J, Watson M, Kuo K (2013) Public Engagement with Biotechnologies Offers Lessons for the Governance of Geoengineering Research and Beyond. PLoS Biol 11(11): e1001707. doi:10.1371/journal.pbio.1001707 | License: CC BY 2.5 | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:SPICE_TESTBED_-_DEPLOYED_POSITION.jpg