GPUs are not “faster CPUs” — they are built for a different kind of math

The simplest way to understand why GPUs have become the default hardware for AI is to stop thinking of them as faster versions of CPUs. They are not. A CPU is designed to be a flexible generalist: it can handle operating systems, databases, control logic, browser tabs, and the unpredictable messiness of real software with low latency and strong single-thread performance. A GPU, by contrast, is designed to do the same type of operation over and over again across thousands of data elements at once.

That distinction matters because modern AI, especially deep learning, is dominated by matrix operations. Training a model means multiplying huge tensors, adjusting weights, and moving large batches of data through layers of computation. In this setting, raw parallelism matters more than the ability to execute a few instructions with ultra-low latency. GPUs thrive here because they contain thousands of smaller compute units that can process many pieces of the same problem simultaneously.

Think of it this way: a CPU is a highly skilled chef who can make many different dishes quickly and adapt on the fly. A GPU is a large line kitchen with dozens of stations all chopping, stirring, and plating the same menu item at once. For AI, the line kitchen usually wins.
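
To put rough numbers on that, here is a minimal sketch of the arithmetic behind one dense layer. The layer sizes are hypothetical, chosen only for illustration, and the naive `matmul` is there to show the structure of the work, not to be fast.

```python
def matmul_flops(m: int, k: int, n: int) -> int:
    """Operation count for an (m x k) @ (k x n) product.

    Each of the m * n outputs needs k multiplies and k adds, counted as 2 * k.
    """
    return 2 * m * k * n


def matmul(a, b):
    """Naive matrix multiply on nested lists.

    Every output cell depends only on one row of `a` and one column of `b`,
    so all m * n cells could in principle be computed at the same time.
    """
    k = len(b)
    n = len(b[0])
    return [[sum(row[p] * b[p][j] for p in range(k)) for j in range(n)] for row in a]


# Hypothetical layer: a batch of 4096 tokens, 8192 inputs, 8192 outputs.
ops = matmul_flops(4096, 8192, 8192)
independent_outputs = 4096 * 8192

print(f"{ops / 1e12:.2f} trillion floating-point operations for one pass")
print(f"{independent_outputs:,} output values, none of which depends on another")
```

The second number is the one that matters for hardware choice: tens of millions of identical, independent calculations is exactly the shape of work the line kitchen is built for.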

The real advantage is throughput, not just peak performance

When people compare GPUs and CPUs, they often focus on headline numbers like FLOPS. But the practical advantage of GPUs in AI is not merely that they can hit a higher peak. It is that they can sustain much higher throughput on workloads that are mathematically regular and highly parallel.

AI training is especially suited to this model. A training run may involve processing billions or trillions of tokens or images, repeatedly executing the same kernels across enormous batches. GPUs can keep their compute lanes busy because the workload is predictable enough to be split into many concurrent tasks. CPUs, with far fewer cores and far less aggregate parallel capacity, spend more time doing work sequentially or waiting on memory.

GPUs also benefit from specialized units increasingly tuned for AI, such as tensor cores. These units are built into the GPU to speed up the exact operations neural networks use most often, especially mixed-precision matrix math, in which inputs are stored in a compact low-precision format while results are accumulated at higher precision. That is one reason modern AI performance is not just about “more chips” but about chips architected for a very specific arithmetic profile.
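
Why mix precisions at all? The sketch below simulates the idea in plain Python, using the standard library's `struct` module to round values to half precision (fp16) and single precision (fp32). It is an illustration of the numerical effect only, under the assumption of fp16 inputs with either an fp16 or an fp32 accumulator. It does not model how any particular GPU implements its matrix units.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("e", struct.pack("e", x))[0]


def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def dot(a, b, round_acc):
    """Dot product with fp16 inputs; `round_acc` sets the accumulator precision."""
    acc = 0.0
    for x, y in zip(a, b):
        product = to_fp16(x) * to_fp16(y)  # inputs stored in low precision
        acc = round_acc(acc + product)     # running sum rounded after every step
    return acc


n = 10_000
a = [1.0] * n
b = [0.001] * n  # the true sum is close to 10

narrow = dot(a, b, to_fp16)  # fp16 inputs, fp16 accumulator
wide = dot(a, b, to_fp32)    # fp16 inputs, fp32 accumulator

print(f"fp16 accumulator: {narrow}")
print(f"fp32 accumulator: {wide}")
```

With the narrow accumulator, the running sum eventually grows large enough that each new small term is rounded away, so the result stalls well short of 10. Keeping the inputs small but the accumulator wide avoids that while still halving the memory needed per input value.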

Memory bandwidth is one of the hidden reasons GPUs dominate

AI compute is not only about arithmetic. It is also about moving data fast enough to keep the arithmetic units fed. This is where GPUs gain another major edge over CPUs: memory bandwidth.

CPUs are optimized for sophisticated control flow, with cache hierarchies that work well when a program touches relatively small amounts of data in complex patterns. GPUs, by contrast, are built to stream large amounts of data through the chip quickly. High-bandwidth memory and wide interfaces let GPUs move tensors into and out of compute units at a pace CPUs generally cannot match.

In practice, this means that even if a CPU had enough raw math capability on paper, it would often be starved by memory traffic long before it could keep up with a GPU on AI workloads. Training and inference at scale are frequently bottlenecked not by arithmetic alone, but by how efficiently the system can feed data through memory, caches, and interconnects.
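
One common way to reason about this is the roofline model: attainable performance is the lower of the chip's peak compute rate and its memory bandwidth multiplied by the workload's arithmetic intensity (operations per byte moved). The sketch below applies it to two made-up devices. The peak and bandwidth figures are assumptions for illustration, not specifications of any real product, and the intensity estimate assumes each matrix crosses the memory interface exactly once.

```python
def attainable_flops(peak_flops: float, mem_bandwidth: float, intensity: float) -> float:
    """Roofline model: performance is capped by compute or by memory traffic."""
    return min(peak_flops, mem_bandwidth * intensity)


def matmul_intensity(m: int, k: int, n: int, bytes_per_value: int = 2) -> float:
    """Operations per byte for an (m x k) @ (k x n) product.

    Assumes both inputs are read once and the output is written once.
    """
    ops = 2 * m * k * n
    bytes_moved = bytes_per_value * (m * k + k * n + m * n)
    return ops / bytes_moved


# Hypothetical devices: (peak operations/second, memory bytes/second).
devices = {
    "cpu-like": (4e12, 0.3e12),
    "gpu-like": (400e12, 3e12),
}

# A large training batch versus a single-item request through the same layer.
workloads = {
    "batch of 4096": matmul_intensity(4096, 8192, 8192),
    "batch of 1": matmul_intensity(1, 8192, 8192),
}

for wname, intensity in workloads.items():
    for dname, (peak, bandwidth) in devices.items():
        rate = attainable_flops(peak, bandwidth, intensity)
        bound = "compute" if rate == peak else "memory"
        print(f"{wname:>14} on {dname}: {rate / 1e12:7.2f} Tops/s ({bound}-bound)")
```

Under these assumptions the large batch is limited by compute on both devices, while the single-item case is limited by memory bandwidth on both. In the second case the gap between the two devices is set entirely by how fast each can move data, not by how fast it can multiply.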

Why CPUs still matter: orchestration, latency, and the “everything else” problem

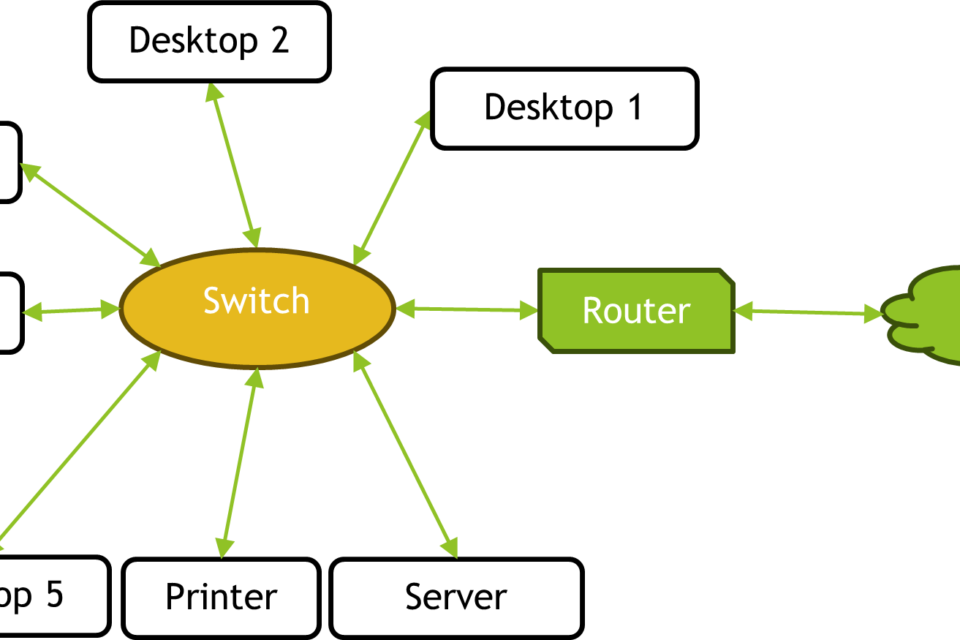

None of this makes CPUs obsolete. In fact, most AI systems rely heavily on them. CPUs still handle orchestration, data preprocessing, networking, storage, scheduling, and the long tail of tasks that are difficult to vectorize or parallelize cleanly. They are the glue that keeps the AI stack functioning.

CPUs also remain better for workloads where each task is different, branching is heavy, or latency matters more than throughput. A recommendation engine serving a highly customized request, a transaction system, or a piece of control software in industrial automation may still be better suited to CPU execution or a mixed CPU-GPU design.

That is why the most realistic deployments are not “GPU instead of CPU,” but “GPU alongside CPU.” The CPU is often the conductor. The GPU is the orchestra.

The infrastructure story: GPUs are only as good as the system around them

The case for GPUs becomes even more compelling at cluster scale, but that is also where the tradeoffs become more visible. A single GPU can accelerate AI work dramatically. A fleet of GPUs can transform a data center, provided the surrounding infrastructure is strong enough to keep up.

Large-scale AI training depends on fast interconnects between GPUs, high-capacity networking between servers, and storage systems that can deliver data without creating bottlenecks. If one GPU has to wait on another, or if the network fabric is too slow, the theoretical gains from parallel compute collapse into idle time. This is why distributed AI systems lean on technologies such as NVLink, InfiniBand, and increasingly Ethernet-based fabrics tuned for low latency and high throughput.
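
A back-of-the-envelope model shows how quickly a slow fabric erodes the benefit of adding GPUs. The sketch assumes simple data parallelism: compute divides evenly across GPUs, and gradients are then synchronized with a ring all-reduce that does not overlap with compute. Real systems overlap communication and use more elaborate topologies, and every number below is hypothetical.

```python
def step_time(n_gpus: int, compute_s: float, grad_bytes: float, link_bytes_per_s: float) -> float:
    """Seconds per training step under the simplified model described above."""
    compute = compute_s / n_gpus
    # In a ring all-reduce each GPU sends about 2 * (n - 1) / n times the gradient size.
    comm = 2 * (n_gpus - 1) / n_gpus * grad_bytes / link_bytes_per_s
    return compute + comm


def scaling_efficiency(n_gpus: int, compute_s: float, grad_bytes: float, link_bytes_per_s: float) -> float:
    """Achieved speedup divided by ideal speedup (1.0 means perfect scaling)."""
    one = step_time(1, compute_s, grad_bytes, link_bytes_per_s)
    many = step_time(n_gpus, compute_s, grad_bytes, link_bytes_per_s)
    return one / (n_gpus * many)


# Hypothetical job: 8 s per step on one GPU, 10 GB of gradients per step.
COMPUTE_S = 8.0
GRAD_BYTES = 10e9

# Hypothetical per-GPU link speeds in bytes/second.
links = {"fast fabric": 400e9, "slow fabric": 12.5e9}

for name, bandwidth in links.items():
    for n in (8, 64):
        eff = scaling_efficiency(n, COMPUTE_S, GRAD_BYTES, bandwidth)
        print(f"{name}, {n:>2} GPUs: {eff:.0%} of ideal speedup")
```

The point of the exercise is the shape of the result rather than the specific percentages: as GPU count rises, per-GPU compute time shrinks but synchronization time does not, so the interconnect increasingly determines how much of the hardware is doing useful work.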

Power and cooling are the other hard constraints. GPUs are dense compute devices, and dense compute means dense heat. A modern AI rack can draw tens of kilowatts, and the densest designs exceed 100 kW, turning the data center into a constrained energy system as much as a computing one. This is not a minor operational issue. It shapes site selection, grid planning, utility relationships, and capex decisions for hyperscalers and enterprises alike.
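
The arithmetic behind that constraint is simple enough to sketch. All inputs below are hypothetical placeholders, not figures for any specific product or facility. PUE (power usage effectiveness) is the ratio of total facility power to IT equipment power.

```python
def rack_power_kw(gpus: int, watts_per_gpu: float, overhead: float) -> float:
    """IT power for one rack in kilowatts.

    `overhead` is the extra draw from CPUs, network cards, and fans,
    expressed as a fraction of GPU power.
    """
    return gpus * watts_per_gpu * (1 + overhead) / 1000


def facility_power_kw(it_power_kw: float, pue: float) -> float:
    """Total draw including cooling and power-delivery losses."""
    return it_power_kw * pue


# Hypothetical deployment: 100 racks of 72 GPUs at 1000 W each.
rack_kw = rack_power_kw(gpus=72, watts_per_gpu=1000, overhead=0.25)
site_kw = facility_power_kw(rack_kw * 100, pue=1.2)

print(f"Per rack: {rack_kw:.0f} kW of IT load")
print(f"100 racks with cooling: {site_kw / 1000:.1f} MW at the meter")
```

Even with modest assumptions, a deployment of this size lands in the range where the utility connection, not the hardware order, becomes the planning bottleneck.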

So while GPUs are better than CPUs for AI in a narrow computational sense, the larger truth is that they demand a more sophisticated infrastructure stack. Without that stack, the performance advantage gets diluted fast.

Where GPUs break down: small models, low budgets, and power-limited deployments

There are also cases where GPUs are not the best answer. For smaller models, lighter inference tasks, or deployments where capital cost and power draw matter more than absolute throughput, CPUs can still be the better engineering choice. If the model is modest and traffic is low, the overhead of provisioning a GPU may not be justified.
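
A rough cost comparison makes the break-even visible. The instance capacities and prices below are invented for illustration. The only assumption that matters is the pattern: a GPU instance costs more per hour but serves far more requests.

```python
import math


def hourly_cost(demand_rps: float, capacity_rps: float, price_per_hour: float) -> float:
    """Cost of enough identical instances to serve `demand_rps` requests/second.

    At least one instance is always provisioned, even when it sits mostly idle.
    """
    instances = max(1, math.ceil(demand_rps / capacity_rps))
    return instances * price_per_hour


# Hypothetical instance types: (requests/second per instance, price per hour).
CPU = (20.0, 1.0)
GPU = (1000.0, 4.0)

for demand in (10, 100, 500):
    cpu = hourly_cost(demand, *CPU)
    gpu = hourly_cost(demand, *GPU)
    cheaper = "CPU" if cpu < gpu else "GPU"
    print(f"{demand:>4} req/s: CPU ${cpu:.2f}/h, GPU ${gpu:.2f}/h -> {cheaper}")
```

At low traffic the GPU's capacity goes unused and its fixed hourly price dominates. The crossover point depends entirely on the real prices and throughput of the hardware in question, so it is worth measuring rather than assuming.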

Edge deployments are another example. In robotics, factory automation, or remote industrial environments, power budgets and thermal constraints often limit the feasibility of large GPUs. Some of these systems use embedded accelerators or smaller inference chips instead. Even when a GPU is desirable, the operational realities of heat, space, and reliability can make it impractical.

There is also the software issue. GPUs require specialized programming environments, optimized kernels, and careful attention to precision, batching, and memory layout. That complexity can be worth it at scale, but it is still real. CPU software is generally easier to develop and deploy across diverse environments. GPU acceleration buys performance, but it also introduces a layer of optimization work that is not free.

The practical conclusion: GPUs are the AI engine, CPUs are the system of record

The reason GPUs beat CPUs for AI is straightforward once you reduce the hype: AI workloads are dominated by parallel math and memory throughput, both of which align with GPU architecture. CPUs are engineered for flexibility, not massively parallel execution. That makes them indispensable in the overall system, but not the best choice for the core compute of modern deep learning.

For buyers, architects, and operators, the useful question is not whether GPUs are “better” in the abstract. It is where the workload sits on the spectrum between parallel throughput and control-heavy logic, and what the surrounding infrastructure can support. In a large training cluster, the GPU is the engine that sets the pace. In a real production system, it is only one part of a chain that includes CPUs, networking, memory, storage, power delivery, and cooling.

That is the durable lesson of the AI hardware transition: GPUs did not win because they are universally superior. They won because modern AI turned out to be a compute problem that maps unusually well to what they were built to do. The limits now are not just in the silicon, but in how much power, cooling, networking, and storage the rest of the stack can supply.

Image: 6600GT GPU.jpg | Own work | License: CC BY-SA 3.0 | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:6600GT_GPU.jpg