Inference, not training, is where AI becomes a product

When most people talk about AI, they are usually talking about training: the expensive, highly visible process of teaching a model by feeding it enormous amounts of data. Training is where the breakthroughs happen, and it is where the biggest GPU clusters are often deployed. But for many companies, the more important workload is inference.

Inference is the part of AI that happens after a model has already been trained. It is the stage where the model is put to work answering prompts, classifying images, recommending products, detecting fraud, transcribing speech, guiding robots, or generating text and video. In plain English: training builds the model, inference uses it. If training is the research and development phase, inference is the production line.

This distinction matters because the economics, infrastructure, and performance constraints are completely different. A company may train a model a handful of times a year, but it may run inference millions or billions of times a day. That makes inference the workload that determines whether AI is truly practical at scale.

What inference actually does

At a technical level, inference means running the model forward on new data. The model takes an input, processes it through its learned parameters, and produces an output. For a language model, that output may be the next token in a sentence. For a vision system, it may be a label or bounding box. For an industrial robot, it may be a control decision made in real time.

The key point is that inference is not guesswork in the casual sense. It is deterministic computation applied to probability. The model has already internalized patterns during training; inference applies those patterns to a live request. In practice, this can happen in a cloud data center, on a local server, at the edge, or directly on a device like a phone, camera, car, or factory controller.

Different applications demand different kinds of inference. A chatbot can tolerate a few hundred milliseconds of delay. An automated trading system cannot. A warehouse robot may need predictions with very low latency and high reliability. A recommendation engine may prioritize throughput over single-request speed. Once you start looking closely, inference is less like a single task and more like a broad class of production computing problems.

Why inference has become the center of the AI economy

The reason inference matters now is simple: deployment scale. Training a frontier model can cost millions of dollars and consume vast amounts of power, but that is often a one-time or occasional expense. Inference, by contrast, grows with users. Every new prompt, search query, chatbot session, image generation, fraud check, or voice transcription adds to the bill.

That changes the way companies think about AI. During the training boom, the bottleneck was access to large GPU clusters and enough high-speed networking to keep them busy. During the inference boom, the bottleneck shifts toward latency, serving efficiency, memory bandwidth, and cost per request. A model that is technically impressive but too slow or too expensive to serve at scale will struggle to become a business.

This is why the industry is obsessed with inference optimization. The goal is not just to make models smarter, but cheaper and faster to operate. A 2x gain in inference efficiency can be more valuable than a modest bump in model quality because it affects every request the system handles.

The hardware story: GPUs, accelerators, and memory pressure

Inference is often associated with GPUs, and for good reason. GPUs are excellent at parallel computation, which makes them well suited to the math inside neural networks. But inference does not stress hardware in exactly the same way as training. Training tends to demand massive floating-point throughput and long-running, tightly synchronized jobs. Inference can be more sensitive to memory capacity, memory bandwidth, batching efficiency, and response latency.

This is why inference has opened the door to a wider range of accelerators. Some workloads run best on GPUs. Others benefit from custom AI chips, inference-specific ASICs, or lower-power edge processors. The winning chip is not always the fastest on a benchmark; it is the one that delivers the best performance per watt and performance per dollar for a specific deployment pattern.

Memory is also a central constraint. Large models may require substantial VRAM or high-bandwidth memory to hold parameters and intermediate states. When memory runs short, systems may need to page data, split workloads, or reduce batch size, all of which hurt performance. Inference hardware design increasingly revolves around feeding the model fast enough to keep compute units busy without wasting energy or inflating latency.

Why software matters as much as silicon

Inference performance is not determined by chips alone. Software stacks—compilers, runtimes, schedulers, model servers, quantization tools, caching layers, and request routing systems—play a major role in what an AI system can actually deliver.

For example, batching multiple requests together can improve throughput, but it may add latency. Quantization can reduce memory use and speed up execution, but it may slightly affect accuracy. Caching can eliminate repeat work for popular prompts or repeated context, but only if the system is designed to exploit it. Even the way tokens are generated in a language model can change cost and speed dramatically.

In practice, inference is a systems-engineering discipline. The best deployments are usually not the result of one magic model or one magic chip. They are the result of careful balancing across compute, memory, networking, software optimization, and workload design. That is why infrastructure teams, not just model researchers, have become central to AI strategy.

The economics of serving AI at scale

Inference is where AI revenue either works or fails. A company can build a remarkable model, but if every customer interaction burns too much compute, margins collapse. This is especially important for consumer apps, copilots, search products, and enterprise tools that depend on high usage volumes.

The cost structure of inference tends to push companies toward one of three strategies. They can use larger, more capable models and absorb the expense as a premium feature. They can optimize relentlessly to serve more requests on less hardware. Or they can shrink the model through distillation, quantization, or routing systems that send requests to the smallest model capable of handling them.

That last approach is becoming more common. Many real-world products do not need a frontier model for every interaction. A lightweight model may answer simple questions, while a larger model handles more difficult ones. This tiered architecture is one of the clearest signs that inference is a mature systems problem, not just a model-quality problem.

Inference is also changing data center design

As inference workloads grow, data centers are being designed less like general-purpose server farms and more like specialized AI factories. Power delivery, cooling, rack density, networking, and storage all come under pressure when a cluster is built to serve large models around the clock.

Unlike training jobs, which may be scheduled in large bursts, inference infrastructure must stay responsive continuously. That means operators care about service-level objectives, tail latency, failover, and load balancing. They also care about where models run geographically. Some inference must stay close to users for speed. Some must remain on-prem for privacy or regulatory reasons. Some must be pushed to the edge because sending data to the cloud would be too slow or too costly.

This creates demand across the whole stack: more efficient chips, faster interconnects, higher-capacity memory, better cooling, and more sophisticated orchestration software. In that sense, inference is not just an AI software issue. It is an energy and infrastructure issue.

Why the future of AI may be decided by inference

The next phase of AI will not be defined only by who can train the biggest model. It will be defined by who can deploy useful intelligence reliably, cheaply, and at scale. That is inference’s real significance.

For businesses, inference determines whether AI features are profitable, responsive, and safe enough to ship. For chipmakers, it defines a huge and growing market segment with different requirements from training. For cloud providers and data center operators, it shapes demand for power, cooling, networking, and capacity planning. For end users, it determines whether AI feels instant and useful or sluggish and expensive.

In other words, inference is where AI stops being an R&D demonstration and starts behaving like infrastructure. That is why it matters so much. The models may be trained in a lab, but the value is created in production.

The bottom line

If training is the headline, inference is the business. It is the process that turns AI from a technical milestone into an operational system with real costs, real constraints, and real economic consequences. The companies that understand inference best will not just build smarter models—they will build the infrastructure to serve them efficiently.

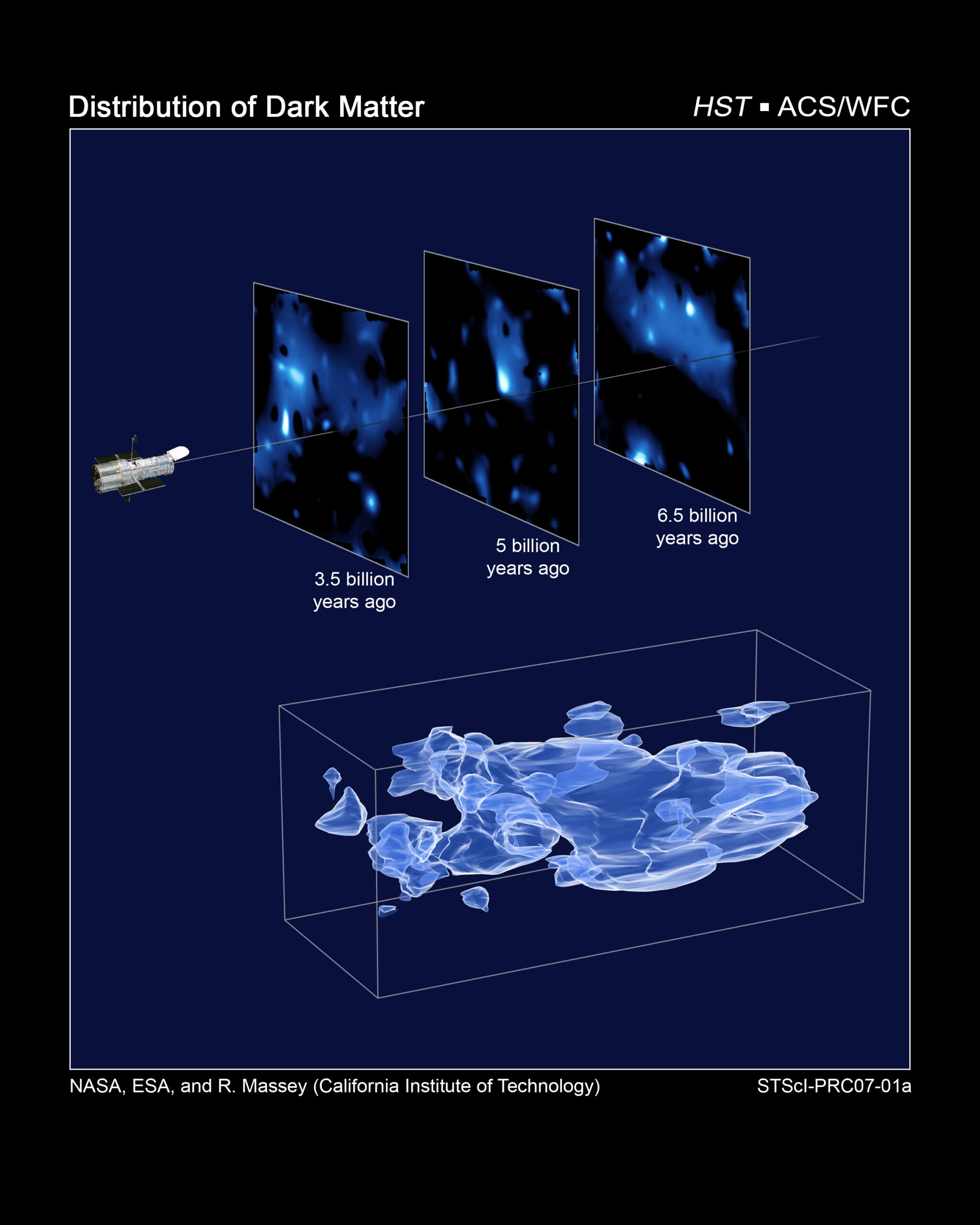

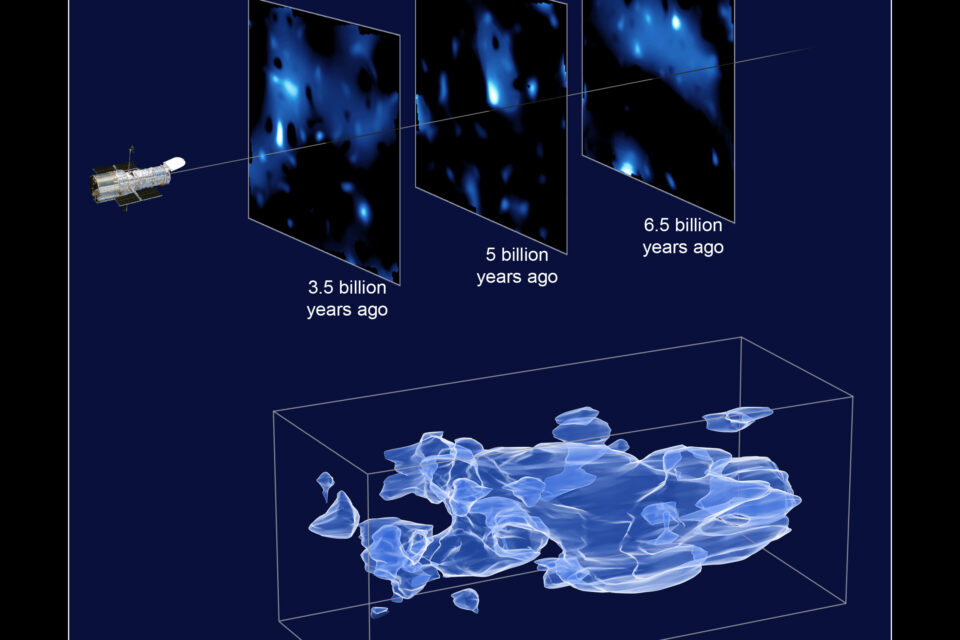

Image: Three-Dimensional Distribution of Dark Matter in the Universe (with 3 slices of time) (2007-01-2026).jpg | Three-Dimensional Distribution of Dark Matter in the Universe (with 3 slices of time) | License: Public domain | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:Three-Dimensional_Distribution_of_Dark_Matter_in_the_Universe_(with_3_slices_of_time)_(2007-01-2026).jpg