Edge is not a trend line — it is a deployment strategy

Edge computing gets described as if it were a new layer in the cloud stack, but that undersells what is actually happening. In practice, it is a response to a simple constraint: not every digital task can afford the round trip to a distant data center. When milliseconds matter, when bandwidth is expensive, or when local resilience is more important than central control, compute moves closer to the source of the data.

That shift is changing infrastructure in a more concrete way than the buzz around “distributed intelligence” suggests. It is altering where servers are installed, how networks are designed, what kind of chips get deployed, and how operators think about power and cooling. The story is not edge versus cloud. It is cloud plus edge, with each architecture taking on the jobs it does best.

The clearest way to understand edge computing is to compare it with the centralized model that dominated the last decade. Hyperscale data centers are still unmatched for large-scale training, fleet management, storage aggregation, and elastic workloads. They benefit from concentration: shared power feeds, dense cooling systems, specialized networking, and economies of scale. Edge moves in the opposite direction. It trades some of those efficiencies for proximity, lower latency, and local survivability.

That tradeoff is not abstract. A factory robot that needs immediate vision processing cannot wait on a network hop to a region hundreds of miles away. A retail store using real-time inventory systems may need local processing to keep operating if connectivity drops. A telecom tower serving a dense urban area benefits from compute near the radio access network to reduce congestion and response time. In each case, edge exists because the cost of moving the data, whether measured in latency, bandwidth, or downtime risk, is higher than the cost of processing it locally.
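The latency side of that tradeoff has a physical floor that can be estimated before any hardware is bought. The sketch below is a rough illustration, not a network model: it assumes light in optical fiber covers about 200 km per millisecond and ignores routing, queuing, and protocol overhead, so real round trips will be longer. The function names and the example distances are hypothetical.

```python
# Assumption: signal speed in optical fiber is roughly 200 km per millisecond
# (about two thirds of the speed of light in a vacuum).
FIBER_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * distance_km / FIBER_KM_PER_MS


def fits_deadline(distance_km: float, processing_ms: float, deadline_ms: float) -> bool:
    """True if propagation plus processing time stays within the deadline."""
    return min_round_trip_ms(distance_km) + processing_ms <= deadline_ms


if __name__ == "__main__":
    # Hypothetical vision task: 8 ms of processing, 10 ms total deadline.
    print(fits_deadline(distance_km=500, processing_ms=8, deadline_ms=10))  # remote region
    print(fits_deadline(distance_km=1, processing_ms=8, deadline_ms=10))    # on-site node
```

Because the estimate leaves out everything except propagation, it is only useful for ruling placements out: a site that fails this check cannot be rescued by a faster server in the distant region.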

The economics favor selective distribution, not universal decentralization

One of the biggest misunderstandings about edge is the idea that it means “putting a small data center everywhere.” That is neither practical nor necessary. Edge deployments are expensive to maintain because they fragment the operating model. Instead of a few large facilities with standardized staffing and predictable cooling, operators inherit many small sites with uneven environmental conditions, limited physical security, and more difficult servicing.

That is why edge makes sense only when the economics line up. The most compelling use cases are the ones where local compute reduces other recurring costs. In industrial settings, edge can cut downtime and improve automation. In telecom, it can reduce backhaul traffic. In video analytics, it can avoid shipping massive streams of raw footage into centralized cloud pipelines. In all of these cases, the savings are not just about latency. They are about bandwidth, storage, network transit, and operational continuity.
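One way to make "the economics line up" concrete is a per-site break-even check. The following is a minimal sketch under simplifying assumptions: every field name and figure is hypothetical, costs are treated as flat per-gigabyte rates, and benefits such as avoided downtime are left out entirely.

```python
from dataclasses import dataclass


@dataclass
class SiteCosts:
    """Hypothetical monthly figures for one site; all values are illustrative."""
    raw_gb_per_month: float        # data shipped upstream if there is no edge node
    reduced_fraction: float        # share of that data still sent after local processing (0..1)
    transit_cost_per_gb: float     # backhaul / network transit price
    cloud_cost_per_gb: float       # central ingest, processing, and storage price
    edge_node_monthly_cost: float  # amortized hardware plus power and servicing


def monthly_saving(site: SiteCosts) -> float:
    """Net monthly saving from adding an edge node; negative means a loss."""
    per_gb = site.transit_cost_per_gb + site.cloud_cost_per_gb
    centralized = site.raw_gb_per_month * per_gb
    with_edge = (site.raw_gb_per_month * site.reduced_fraction * per_gb
                 + site.edge_node_monthly_cost)
    return centralized - with_edge


def worth_deploying(site: SiteCosts) -> bool:
    """True when the edge node pays for itself on data costs alone."""
    return monthly_saving(site) > 0


if __name__ == "__main__":
    site = SiteCosts(raw_gb_per_month=1000, reduced_fraction=0.25,
                     transit_cost_per_gb=0.5, cloud_cost_per_gb=0.5,
                     edge_node_monthly_cost=500)
    print(monthly_saving(site), worth_deploying(site))
```

The same site flips to a loss if local processing barely shrinks the data or if servicing the node is expensive, which is the selective-distribution argument in numeric form.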

The architecture also changes how money is spent. Instead of buying one large facility every few years, organizations may deploy many smaller nodes across branch offices, factories, retail locations, towers, and local aggregation points. That creates a different capital profile: more modular hardware, more remote management tools, and more emphasis on standardization. The winners in this market are not simply the companies with the fastest processors. They are the ones that can ship resilient, power-efficient systems that can be installed and managed at scale.

Semiconductors and power efficiency now matter as much as raw performance

Edge computing changes the chip conversation in a meaningful way. In a hyperscale environment, operators can justify high-density accelerators, large shared cooling systems, and specialized networking because they are amortized across enormous workloads. At the edge, power envelopes are tighter, and thermal design is less forgiving. That pushes demand toward more efficient CPUs, low-power GPUs, purpose-built AI accelerators, and integrated systems that can deliver useful throughput without requiring a heavy infrastructure buildout.

This is especially important as AI inference moves outward from centralized training clusters. Training large models still belongs in major data centers, where the compute, power, and cooling budgets are easier to concentrate. But inference is increasingly deployed where the data is generated. That may be an on-premises server in a warehouse, a ruggedized system in a vehicle, or a compact rack at a telecom edge site. The technical requirement is not maximum scale; it is acceptable performance within a constrained power and heat budget.

That constraint is reshaping procurement decisions. Buyers are evaluating not just FLOPS or TOPS, but watts per useful workload, reliability under environmental stress, and ease of remote orchestration. Edge infrastructure rewards systems that can run unattended, update securely, and degrade gracefully. As a result, semiconductor roadmaps increasingly intersect with product categories that were once considered niche: industrial PCs, embedded AI modules, edge servers, and specialized networking silicon.
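"Watts per useful workload" can be expressed as a simple screening step. The sketch below assumes the buyer has measured throughput and sustained power draw on their own workload; the `Candidate` type, the product labels, and the numbers are all hypothetical, and reliability and manageability are deliberately not modeled.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    name: str                  # hypothetical product label
    inferences_per_sec: float  # throughput measured on the buyer's own workload
    watts: float               # sustained power draw under that workload


def inferences_per_joule(c: Candidate) -> float:
    """Useful work per unit of energy (one watt is one joule per second)."""
    return c.inferences_per_sec / c.watts


def shortlist(candidates: List[Candidate], power_budget_watts: float,
              min_inferences_per_sec: float) -> List[Candidate]:
    """Keep systems that fit the site's power budget and meet the throughput
    floor, ordered from most to least energy-efficient."""
    fits = [c for c in candidates
            if c.watts <= power_budget_watts
            and c.inferences_per_sec >= min_inferences_per_sec]
    return sorted(fits, key=inferences_per_joule, reverse=True)


if __name__ == "__main__":
    options = [
        Candidate("datacenter-accelerator", 1000, 400),
        Candidate("edge-server", 300, 60),
        Candidate("embedded-module", 100, 15),
    ]
    for c in shortlist(options, power_budget_watts=100, min_inferences_per_sec=50):
        print(c.name, round(inferences_per_joule(c), 2))
```

Filtering before ranking reflects the point made above: the fastest part is excluded outright when it exceeds the site's power envelope, and efficiency only decides among the systems that fit.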

Network architecture is becoming a cost center again

For years, cloud architecture encouraged a mental model where compute was “somewhere out there” and the network was just the plumbing. Edge computing reverses that assumption. Once workloads are distributed, the network becomes central to both performance and economics. The question is no longer only how much compute is available. It is how much data must move, how often, and at what cost.

This is why edge is tightly linked to 5G, fiber densification, Wi-Fi offload, and private networks. If a workload is sensitive to latency but also generates large volumes of data, it may be more efficient to process that data locally and transmit only the result. A camera system that detects anomalies, for example, does not need to stream every frame to a remote region if the edge node can flag events in real time. That reduces bandwidth demand and improves response time simultaneously.
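The camera example can be put into rough numbers. This is a back-of-the-envelope sketch with assumed values: the frame size, frame rate, event size, and event frequency are hypothetical, kilobytes are taken as 1,000 bytes, and compression behavior is ignored.

```python
def stream_mbps(frame_kb: float, fps: float) -> float:
    """Sustained uplink, in megabits per second, for sending every frame."""
    return frame_kb * 8 * fps / 1000  # kilobytes -> kilobits -> megabits


def event_mbps(event_kb: float, events_per_hour: float) -> float:
    """Average uplink, in megabits per second, for sending only flagged events."""
    return event_kb * 8 * events_per_hour / 3600 / 1000


if __name__ == "__main__":
    # Hypothetical camera: 50 kB frames at 20 frames per second.
    full = stream_mbps(frame_kb=50, fps=20)
    # Hypothetical edge node: ten 450 kB event clips per hour.
    filtered = event_mbps(event_kb=450, events_per_hour=10)
    print(f"raw stream: {full} Mbps")
    print(f"events only: {filtered} Mbps")
    print(f"reduction factor: {full / filtered:.0f}x")
```

The averages hide burstiness, since each event clip still needs enough uplink to arrive promptly, but the orders of magnitude are what drive the backhaul budget.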

For operators, this creates a more layered topology. Some compute remains centralized for storage, analytics, and orchestration. Some sits at regional hubs. Some is pushed all the way to the site. The result is a hierarchy rather than a binary choice. And because every tier has different power, cooling, and uptime requirements, infrastructure planning becomes more complicated — but also more efficient when done well.

Energy and resilience are now part of the business case

Edge computing is also changing infrastructure because it reopens questions about resilience and energy use. Large data centers are efficient per unit of compute, but they concentrate demand on the grid. Edge sites distribute that load. In some cases, that helps avoid pressure on a single location. In others, it means many small facilities with less efficient cooling and backup systems. The energy story is therefore mixed: edge can reduce network energy and avoid overprovisioning, but it can also introduce duplication and operational overhead.

The practical advantage is resilience. Distributed compute can keep local systems running during outages, network disruptions, or upstream cloud failures. That matters for manufacturing, logistics, healthcare, retail, and utilities — sectors where a temporary loss of connectivity has real cost. Infrastructure is no longer judged only by how much throughput it can deliver under ideal conditions. It is also judged by how well it performs when the ideal conditions disappear.

This is where power infrastructure becomes inseparable from compute strategy. Edge deployments often rely on tighter integration with UPS systems, backup generation, local batteries, and increasingly, site-level energy management. The most durable deployments are the ones that treat compute and power as a single design problem. A low-latency system that fails during a brownout is not a good system. The deployment has to survive the real-world conditions in which it operates.

The winning model is hybrid, but hybrid requires discipline

Edge computing is not replacing data centers. It is forcing a more honest division of labor between centralized and local infrastructure. Hyperscale clouds still dominate training, large analytics, long-term storage, and global control planes. Edge handles immediacy, locality, and resilience. The challenge is making those layers work together without creating operational chaos.

That means standard hardware footprints, unified management software, strong security policies, and clear workload placement rules. It also means resisting the temptation to deploy edge everywhere simply because the technology exists. The best edge strategies are selective. They place compute where it creates measurable value, not where it merely sounds modern.
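"Clear workload placement rules" can be as simple as a small, auditable function. The sketch below is one possible rule set, not a recommended policy: the three tiers follow the site, regional hub, and central layers described earlier, while the `Workload` fields, the round-trip figures, and the rule ordering are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    SITE = "site"
    REGIONAL = "regional hub"
    CENTRAL = "central cloud"


@dataclass
class Workload:
    name: str
    max_latency_ms: float       # end-to-end latency the workload can tolerate
    must_run_offline: bool      # must keep working if the uplink drops
    is_training: bool = False   # large-model training job


# Assumed typical round-trip times per tier; real values must be measured.
RTT_MS = {Tier.SITE: 2.0, Tier.REGIONAL: 20.0, Tier.CENTRAL: 80.0}


def place(w: Workload) -> Tier:
    """Pick a tier, defaulting to the most centralized one that is acceptable."""
    if w.is_training:
        return Tier.CENTRAL  # training stays where power and cooling concentrate
    if w.must_run_offline:
        return Tier.SITE     # local survivability overrides everything else
    for tier in (Tier.CENTRAL, Tier.REGIONAL, Tier.SITE):
        if RTT_MS[tier] <= w.max_latency_ms:
            return tier
    return Tier.SITE         # nothing meets the budget; the site is the closest option


if __name__ == "__main__":
    for w in (Workload("robot-vision", 10, False),
              Workload("store-inventory", 500, True),
              Workload("video-analytics", 50, False),
              Workload("nightly-reporting", 5000, False),
              Workload("model-training", 5000, False, is_training=True)):
        print(f"{w.name}: {place(w).value}")
```

Trying the central tier first encodes the selectivity argued for above: a workload moves outward only when a latency or resilience requirement forces it to.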

For infrastructure builders, the opportunity is significant. Edge opens demand for compact servers, efficient accelerators, remote observability tools, rugged networking gear, and new classes of power-aware systems. For enterprises, it offers a way to reduce latency and bandwidth costs while improving operational continuity. For the broader ecosystem, it signals a future in which compute is less centralized, more situational, and more tightly coupled to the physical world.

That is the real change. Edge computing is not just moving servers around. It is changing the geography of digital infrastructure — and in doing so, changing the economics of where compute belongs.

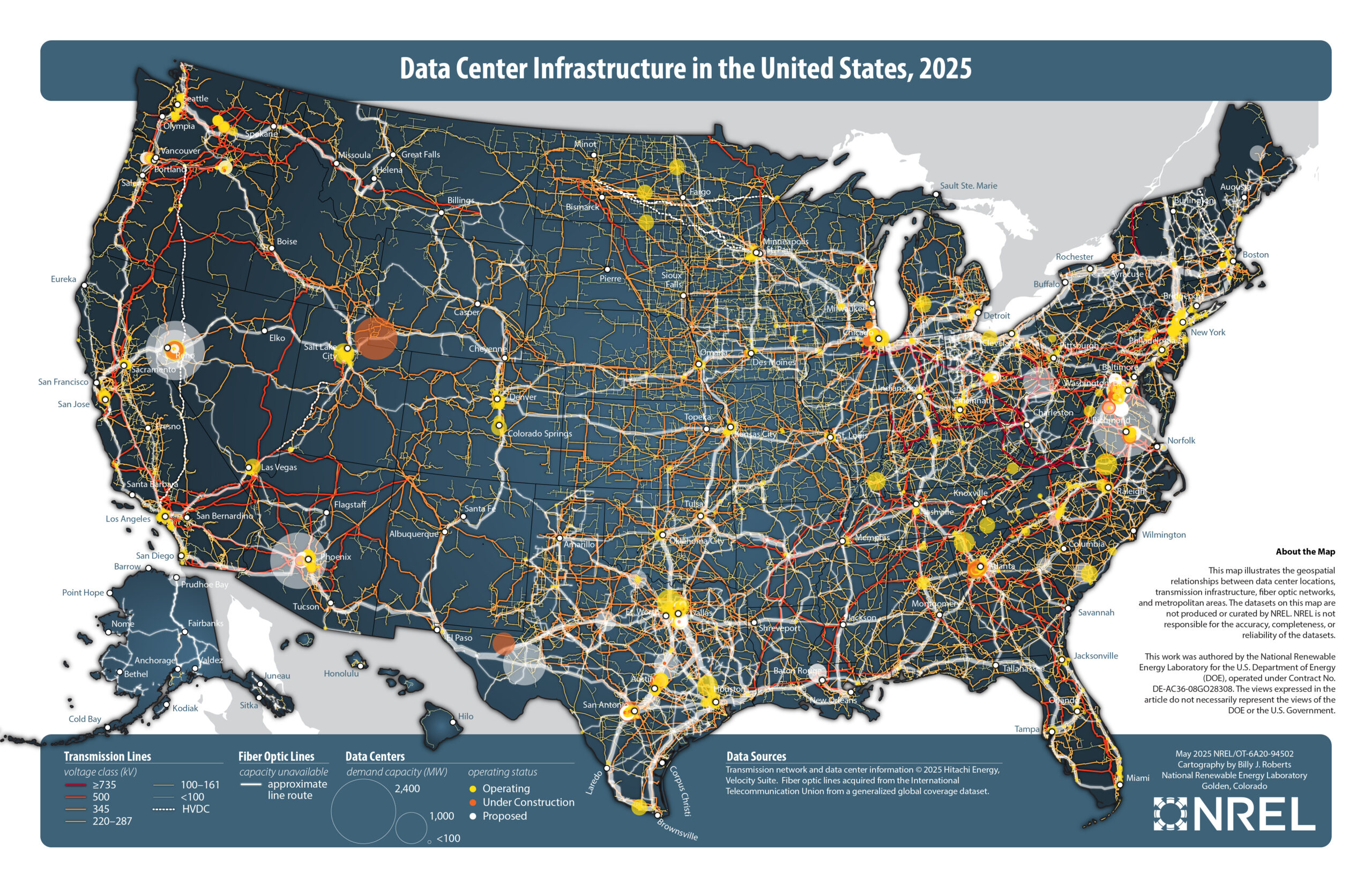

Image: Data center infrastructure in the United States.jpg | https://research-hub.nrel.gov/en/publications/data-center-infrastructure-in-the-united-states-2025-map/ | License: Public domain | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:Data_center_infrastructure_in_the_United_States.jpg