Two very different ways to build behavior

Traditional software and machine learning can look similar from the outside. In both cases, developers write code, deploy it to servers or devices, and expect it to produce a result. But the mechanism underneath is fundamentally different.

Traditional software is built from explicit instructions. A developer defines the rules: if X happens, do Y. The program behaves according to logic the team designed ahead of time. Machine learning, by contrast, is built to discover rules from examples. Instead of encoding every decision manually, engineers provide data and an objective, and the system learns a pattern that approximates the desired behavior.

That difference changes everything: how the system is built, how it fails, how it is tested, and what kind of infrastructure it needs.

Traditional software is explicit; machine learning is statistical

The clearest distinction is this: traditional software applies explicit rules deterministically, while machine learning produces statistical estimates.

In conventional software, the same input should produce the same output every time, assuming the environment is unchanged. If a billing system calculates tax, the formula is written directly into code. If a navigation app chooses a route, the logic is still built from explicit rules, graphs, and optimization steps written by engineers.

Machine learning systems do not usually work that way. They estimate. They assign probabilities, scores, or classifications based on patterns seen during training. A spam filter does not “know” an email is spam in the human sense; it has learned that certain combinations of words, links, sender behavior, and message structure are often associated with spam.

This statistical nature is both the strength and the limitation of ML. It allows systems to handle messy, high-dimensional problems where exact rules are hard to write. But it also means the output is never just a direct expression of developer intent. It is an approximation shaped by data.
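The contrast can be sketched in a few lines of Python. This is an illustration only: the tax rate, the feature names, and the weights are all invented for the example, and the weights stand in for values that a real training run would produce.

```python
import math

# Explicit rule: the formula is written directly into the code.
TAX_RATE = 0.08  # hypothetical flat rate, for illustration only

def sales_tax(amount_cents: int) -> int:
    """Same input, same output, every time."""
    return round(amount_cents * TAX_RATE)

# Learned score: these numbers are made up for the sketch. In a real
# system they would come out of training, not be typed in by a developer.
LEARNED_WEIGHTS = {"num_links": 0.9, "has_urgent_words": 1.4, "known_sender": -2.0}
LEARNED_BIAS = -1.0

def spam_probability(features: dict) -> float:
    """Logistic model: a weighted sum squashed into a probability in (0, 1)."""
    z = LEARNED_BIAS + sum(LEARNED_WEIGHTS[name] * value
                           for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))

suspicious = {"num_links": 3, "has_urgent_words": 1, "known_sender": 0}
familiar = {"num_links": 0, "has_urgent_words": 0, "known_sender": 1}

print(sales_tax(1999))
print(round(spam_probability(suspicious), 3))
print(round(spam_probability(familiar), 3))
```

The tax function returns an answer. The spam function returns an estimate, and some other piece of code still has to decide what to do with it.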

Why machine learning exists at all

If traditional software is so precise, why not use it for everything?

Because many real-world problems are too complex, too variable, or too ambiguous for hand-written rules. Consider image recognition. A conventional program can detect a pixel pattern or a geometric shape, but identifying a cat across lighting conditions, camera angles, breeds, and backgrounds is not a simple rules problem. The same is true for speech recognition, fraud detection, recommendation systems, predictive maintenance, and many robotics tasks.

In these cases, the useful rule is not obvious enough to write down. Machine learning lets the system infer that rule from examples. Show it enough labeled images of cats and not-cats, and it can learn a decision boundary that performs well on new images it has never seen. The same logic applies to predicting which transactions are suspicious or which machine component is likely to fail soon.
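As a toy version of learning a decision boundary from labeled examples, the sketch below trains a perceptron, one of the simplest learning algorithms, on eight invented two-feature points. The data, the labels, and the function names are assumptions made for illustration; real image classifiers use far larger inputs and models.

```python
# Toy labeled examples: (feature_1, feature_2) -> label (1 = "cat", 0 = "not cat").
# The numbers are invented and chosen to be linearly separable.
EXAMPLES = [
    ((2.0, 3.0), 1), ((0.5, 1.0), 0), ((3.0, 3.5), 1), ((1.0, 0.5), 0),
    ((2.5, 2.0), 1), ((0.2, 0.3), 0), ((3.5, 2.5), 1), ((1.2, 1.0), 0),
]

def predict(weights, bias, point):
    """Classify a point by which side of the learned line it falls on."""
    score = sum(w * x for w, x in zip(weights, point)) + bias
    return 1 if score > 0 else 0

def train_perceptron(examples, max_epochs=1000):
    """Nudge the line toward every misclassified example until none remain."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for point, label in examples:
            error = label - predict(weights, bias, point)
            if error != 0:
                weights = [w + error * x for w, x in zip(weights, point)]
                bias += error
                mistakes += 1
        if mistakes == 0:  # every training example is classified correctly
            break
    return weights, bias

weights, bias = train_perceptron(EXAMPLES)

# Points the model never saw during training.
print(predict(weights, bias, (3.0, 3.0)))
print(predict(weights, bias, (0.3, 0.6)))
```

Nobody wrote the rule that separates the two groups. The loop found one that fits the examples, and a different set of examples could yield a different rule.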

In other words, ML is less a replacement for software engineering than a tool for domains where software cannot practically be written as a full set of explicit instructions.

Different inputs, different development cycle

Traditional software development starts with requirements and logic design. Machine learning starts with data.

That sounds simple, but it shifts the whole workflow. In a normal software project, the main engineering task is to translate business rules into code. In ML, the main task is often to assemble a useful dataset, clean it, label it, choose features or model architecture, train the system, and measure whether it generalizes beyond the training set.

This is why ML teams spend so much time on data quality. Bad data does not just make the system a little worse; it can bake mistakes directly into the model. Missing examples, biased labels, stale records, or data collected under the wrong conditions can all distort learning.
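A minimal sketch of the kind of audit a team might run before training, assuming records arrive as dictionaries with hypothetical `amount`, `label`, and `recorded_on` fields. The field names and the one-year staleness cutoff are assumptions, not a standard.

```python
from collections import Counter
from datetime import date

def audit_dataset(records, today, max_age_days=365):
    """Count missing values, stale rows, and label balance before training."""
    rows_with_missing = sum(
        1 for r in records if any(value is None for value in r.values())
    )
    stale_rows = sum(
        1 for r in records if (today - r["recorded_on"]).days > max_age_days
    )
    label_counts = Counter(r["label"] for r in records)
    return {
        "rows": len(records),
        "rows_with_missing": rows_with_missing,
        "stale_rows": stale_rows,
        "label_counts": dict(label_counts),
    }

# Invented sample records, for illustration only.
records = [
    {"amount": 12.5, "label": "ok", "recorded_on": date(2024, 5, 1)},
    {"amount": None, "label": "ok", "recorded_on": date(2024, 4, 20)},
    {"amount": 980.0, "label": "fraud", "recorded_on": date(2021, 1, 15)},
    {"amount": 33.0, "label": "ok", "recorded_on": date(2024, 3, 3)},
]

report = audit_dataset(records, today=date(2024, 6, 1))
print(report)
```

Here the only fraud example is also the stale one, which is exactly the kind of gap that distorts what a model learns.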

That also means iteration looks different. Traditional software bugs are usually traced to a specific line or condition in code. ML failures may come from the dataset, the training objective, the model architecture, or the mismatch between training conditions and real-world conditions. Fixing the problem can require collecting new data, retraining, and revalidating the model, not just editing a function.

Debugging code versus debugging behavior

Engineers often say traditional software is easier to debug because its logic is inspectable. If a payment system charges the wrong amount, you can trace the calculation, inspect the conditionals, and identify the fault. The reasoning path is visible.

Machine learning systems are harder to debug because the reasoning is distributed across learned weights and internal representations rather than written out as readable logic. Modern models can be remarkably effective, but they rarely offer a human-friendly explanation of why a particular prediction was made. A vision model might classify a defective part correctly without indicating which visual cues mattered most.

That opacity matters in regulated or high-stakes environments. In healthcare, finance, industrial automation, and autonomous systems, “it seems to work” is not enough. Teams need testing frameworks, observability, confidence thresholds, fallback logic, and audit trails. In practice, many production ML systems are wrapped with traditional software controls precisely because the model itself is not fully transparent.
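One way such a wrapper might look is sketched below, assuming a hypothetical fraud model that returns a score between 0 and 1. The thresholds, the action names, and the in-memory audit log are illustrative assumptions; a production system would tune the thresholds and persist the log.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class Decision:
    action: str   # "approve", "block", or "manual_review"
    source: str   # "model", "fallback", or "guardrail"
    score: float

AUDIT_LOG = []  # stand-in for a durable audit trail

def decide(score, approve_below=0.2, block_above=0.9):
    """Act on the model only when it is confident; otherwise fall back."""
    if math.isnan(score) or not 0.0 <= score <= 1.0:
        # Guardrail: the model returned something that is not a valid score.
        decision = Decision("manual_review", "guardrail", score)
    elif score >= block_above:
        decision = Decision("block", "model", score)
    elif score <= approve_below:
        decision = Decision("approve", "model", score)
    else:
        # Fallback: the model is unsure, so a person decides.
        decision = Decision("manual_review", "fallback", score)
    AUDIT_LOG.append(decision)
    return decision

for score in (0.95, 0.05, 0.5, float("nan")):
    print(decide(score))
```

Everything in this wrapper is ordinary deterministic code, so it can be read, tested, and audited line by line even when the model behind it cannot.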

Generalization is the point, and the problem

Traditional software is expected to behave exactly as written. Machine learning is expected to generalize.

Generalization means the model should perform well on new data, not just the data it saw during training. This is why ML development involves a train-test split, validation sets, and performance metrics such as precision, recall, and F1 score for classification, or mean absolute error for regression. The goal is not to memorize examples but to learn a pattern that holds up in the wild.
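The metrics named above are short formulas. The sketch below computes them in plain Python on invented held-out labels and predictions; in practice most teams would use a library implementation rather than writing their own.

```python
def precision_recall_f1(y_true, y_pred):
    """Binary classification metrics, where 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0  # of predicted positives, how many were right
    recall = tp / (tp + fn) if tp + fn else 0.0     # of actual positives, how many were found
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)           # harmonic mean of the two
    return precision, recall, f1

def mean_absolute_error(y_true, y_pred):
    """Average size of the error for a regression model."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# Invented held-out labels and predictions, for illustration only.
test_labels = [1, 0, 1, 1, 0, 0, 1, 0]
test_predictions = [1, 0, 0, 1, 1, 0, 1, 0]

precision, recall, f1 = precision_recall_f1(test_labels, test_predictions)
mae = mean_absolute_error([3.0, 5.0, 2.5], [2.5, 5.0, 4.0])
print(precision, recall, f1, mae)
```

These numbers only measure generalization if the evaluated examples were held out of training.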

But generalization is fragile. If the real world shifts, the model can degrade without the code changing at all. This degradation is often called model drift; when the underlying cause is a change in the incoming data, it is called data drift. A fraud model trained on last year’s transaction patterns may become less effective as user behavior changes. A manufacturing defect detector trained on one camera setup may fail when lighting or angle changes. Traditional software can also break when environments change, but ML is especially vulnerable because assumptions about the world are baked into the model during training.
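A deliberately crude drift check is sketched below: it measures how far the mean of a live feature has moved from its training mean, in units of the training standard deviation. The feature values and the alert threshold of 2.0 are assumptions for illustration, and real monitoring usually compares whole distributions rather than a single mean.

```python
from statistics import mean, stdev

def mean_shift(train_values, live_values):
    """Distance between live and training means, in training standard deviations."""
    return abs(mean(live_values) - mean(train_values)) / stdev(train_values)

ALERT_THRESHOLD = 2.0  # hypothetical cutoff; tune per feature in practice

# Invented values of one input feature, for illustration only.
train_feature = [20.0, 22.0, 19.0, 21.0, 23.0, 20.0, 22.0, 21.0]
live_stable = [21.0, 20.0, 22.0, 21.0]
live_shifted = [27.0, 29.0, 28.0, 30.0]

for name, window in (("stable", live_stable), ("shifted", live_shifted)):
    shift = mean_shift(train_feature, window)
    status = "ALERT" if shift > ALERT_THRESHOLD else "ok"
    print(f"{name}: shift={shift:.2f} {status}")
```

The model code is identical in both cases. Only the incoming data has changed, which is why this kind of check has to run continuously in production.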

The hardware story: ML changes the compute stack

Another major difference is computational scale. Traditional software often runs efficiently on general-purpose CPUs. Machine learning, especially modern deep learning, is computationally expensive in training and increasingly demanding in inference at scale.

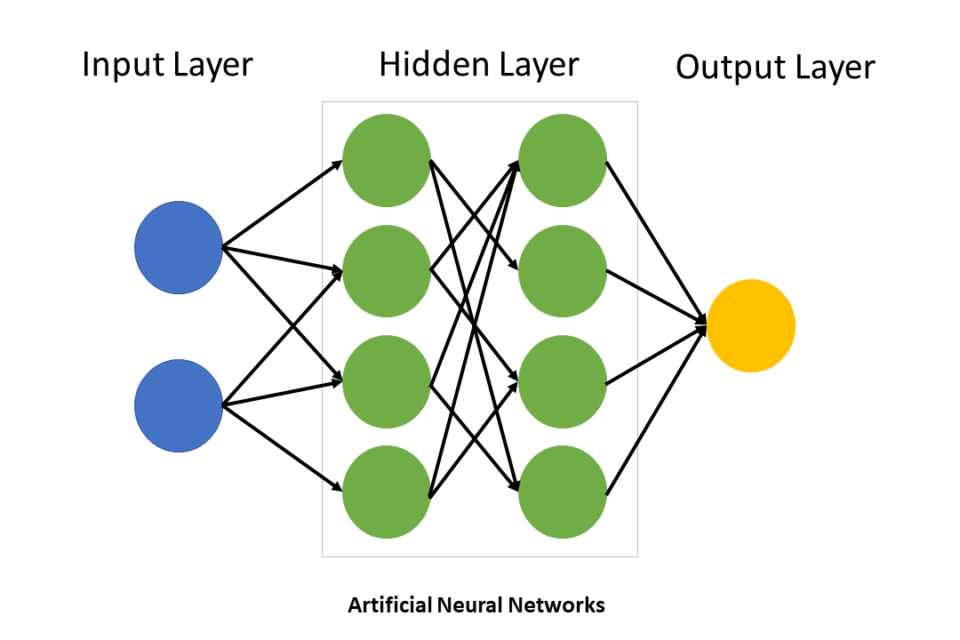

Training large models requires massive matrix math, which is why GPUs, accelerators, and distributed systems became central to the AI stack. Instead of executing a set of branches and routines, ML training performs repeated numerical operations across large tensors. That workload maps extremely well to parallel hardware. The result is a different infrastructure footprint: clusters of GPUs, fast interconnects, high-bandwidth memory, storage systems for large datasets, and energy-intensive data centers.
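To make the matrix math concrete, the sketch below writes one dense neural-network layer as a plain matrix multiplication and counts the multiply-add operations it needs. The layer sizes are assumptions chosen for illustration, and real frameworks run this on optimized kernels rather than Python loops.

```python
def matmul(a, b):
    """Naive matrix product: every output cell is an independent dot product."""
    rows, inner, cols = len(a), len(b), len(b[0])
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]

def multiply_adds(batch, d_in, d_out):
    """Multiply-add count for one dense layer applied to a batch of inputs."""
    return batch * d_in * d_out

# A batch of 2 inputs with 2 features each, passed through a 2-to-3 layer.
inputs = [[1.0, 2.0], [3.0, 4.0]]
layer_weights = [[0.5, -1.0, 0.0], [0.25, 0.5, 1.0]]

outputs = matmul(inputs, layer_weights)
print(outputs)
print(multiply_adds(2, 2, 3))

# The same layer at a hypothetical production size.
print(multiply_adds(1024, 4096, 4096))
```

Because no output cell depends on any other, all of them can be computed at once. That independence is what parallel hardware exploits, and the operation count shows why a single large layer already runs into billions of operations per batch.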

This hardware dependency matters because it changes the economics of software. A traditional application can often be improved mainly through engineering effort. An ML system may also require more compute, more memory bandwidth, and more power to reach acceptable accuracy or latency. In other words, model performance is not just a code problem; it is a systems problem.

What stays the same: software discipline still matters

Despite all these differences, machine learning is not magic. It is still software, and it still depends on good engineering discipline.

Version control, testing, deployment pipelines, monitoring, security review, and rollback procedures still matter. In fact, they matter more because ML introduces new failure modes. A model can degrade silently. A data pipeline can leak information from the test set into training and inflate the measured accuracy. A retrained model can behave differently from the previous version even if the code is unchanged.
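One way to catch a retrained model that behaves differently is a release gate that replays a fixed set of inputs through both versions and measures how often they disagree. The sketch below uses two stand-in functions as models; the thresholds, the input values, and the 10% gate are assumptions for illustration.

```python
def disagreement_rate(old_model, new_model, inputs):
    """Fraction of fixed evaluation inputs on which two model versions differ."""
    differing = sum(1 for x in inputs if old_model(x) != new_model(x))
    return differing / len(inputs)

# Stand-ins for two trained model versions. Real models would be loaded
# from a model registry or from files, not defined inline like this.
def model_v1(score):
    return score >= 0.5

def model_v2(score):
    return score >= 0.6

MAX_DISAGREEMENT = 0.10  # hypothetical release gate

# A frozen evaluation set that is versioned alongside the code.
frozen_inputs = [0.1, 0.4, 0.55, 0.58, 0.7, 0.9, 0.3, 0.65]

rate = disagreement_rate(model_v1, model_v2, frozen_inputs)
release_ok = rate <= MAX_DISAGREEMENT
print(rate, release_ok)
```

A check like this does not say which version is better. It only forces a person to look before a behavioral change ships unnoticed.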

That is why mature ML teams treat the model as one component inside a larger system. They combine learned behavior with ordinary code for validation, business rules, guardrails, and user experience. In many production applications, machine learning handles the uncertain part of the problem while conventional software handles the deterministic edges.

The practical takeaway

The best way to think about machine learning is not as a smarter version of traditional software, but as a different engineering strategy.

Traditional software is ideal when the rules are known, stable, and important to get exactly right. Machine learning is useful when the rules are difficult to specify but examples are plentiful. One is built by encoding logic. The other is built by extracting logic from data.

That distinction explains why ML brings new requirements around data quality, compute, monitoring, and drift management. It also explains why the most effective systems in production often blend both approaches: deterministic software for control, machine learning for prediction.

For readers trying to make sense of the AI era, this is the key mental model. Machine learning is not replacing software engineering. It is expanding the places where software can work at all, and forcing the rest of the stack, from chips to data centers, to adapt around it.

Image: AI Experience at Universal Ai University.jpg | Own work | License: CC0 | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:AI_Experience_at_Universal_Ai_University.jpg