The New Education Bargain

Artificial intelligence is entering education at a moment when school systems are under strain from every direction. Teachers are overloaded, administrators are drowning in paperwork, students are arriving with wider skill gaps, and budgets are tight. In that environment, AI looks less like a futuristic experiment and more like a practical pressure valve. It can generate lesson materials, summarize student progress, draft administrative communications, and provide round-the-clock tutoring. It can also widen existing inequalities, weaken trust in assessment, and shift decision-making toward tools that schools do not fully understand.

That is the central tradeoff. AI may help education systems do more with less, but “more” is not the same as “better.” The question is not whether AI will enter classrooms and school districts. It already has. The real question is what kind of education system emerges when software begins to absorb tasks once performed by teachers, support staff, and curriculum specialists.

What AI Actually Changes Inside a School

Most of the public conversation focuses on student use cases: chatbots for homework help, writing assistants, or adaptive tutoring apps. Those tools matter, but the bigger system-level change is operational. Schools are labor-intensive institutions, and many of their bottlenecks are administrative rather than pedagogical. Attendance tracking, parent communication, scheduling, report writing, translation, and document review consume time that could otherwise go toward instruction and student support.

AI tools can reduce some of that burden. A district might use generative AI to draft individualized emails in multiple languages. Teachers can use it to create differentiated reading passages or quiz questions at various difficulty levels. Counselors can triage large volumes of student notes. Special education teams can summarize meeting records and align paperwork more efficiently. In theory, these gains free humans to do the parts of education that still require judgment, empathy, and relationship-building.

But implementation is where the friction begins. Schools are not software companies. They operate on procurement cycles, union contracts, privacy rules, and state standards that move slowly. A tool that works well in a pilot can fail at scale because it does not integrate with student information systems, is too expensive to license district-wide, or creates legal exposure around data handling. In education, the last mile is often not technical capability but institutional readiness.

Personalization Has Real Upside — and Real Limits

AI’s strongest promise in education is personalization. A student who is behind in algebra does not need a generic worksheet; they need targeted practice at the right level, with feedback delivered quickly enough to correct errors before they harden into habits. A multilingual learner may need explanations in simpler language, while an advanced student may need more challenging material than the classroom pace allows. In principle, AI can help supply this variation at scale.

That matters because one of the persistent failures of mass education is that it treats students as if they learn on the same schedule, in the same format, with the same starting point. Human teachers know this is false. The problem is not awareness; it is capacity. One teacher cannot individually tutor 30 students all day. AI can partially fill that gap.

Still, personalization has limits. AI systems are only as good as the content, prompts, constraints, and feedback loops behind them. They can produce plausible explanations that are wrong, overly confident, or misaligned with a curriculum. They may reinforce a student’s misconceptions if they optimize too aggressively for engagement or short-term performance. And they can tempt districts to substitute machine-generated practice for the harder work of curriculum redesign and teacher development.

The most useful framing is not that AI will replace instruction, but that it will change the economics of support. Routine feedback becomes cheaper. High-quality tutoring becomes more accessible. Yet the core job of education — building durable understanding, habits of thinking, and social competence — still depends on human oversight.

The Assessment Problem Is Going to Get Harder

If AI changes teaching, it changes grading even more. Education systems have long depended on written assignments, take-home essays, and problem sets as signals of student learning. Generative AI breaks the assumption that submitted work is necessarily evidence of the student’s own thinking. That creates a downstream crisis in assessment.

Some institutions have responded by trying to detect AI-generated text. That approach is fragile. Detection tools are unreliable, and an arms race between generators and detectors is a poor foundation for policy. A more durable response is to redesign assessment around in-class writing, oral defense, project-based work, and process documentation that shows how a student arrived at an answer, not just what answer was submitted.

That shift is pedagogically healthy, but it is also labor-intensive. Teachers need more time to evaluate projects and must rely less on easy-to-grade assignments. Schools need better assessment design. Universities, especially, may have to rethink the relationship between coursework, credentialing, and real competence. In other words, AI does not just create a cheating problem. It exposes how much of formal education has depended on low-cost proxies for learning.

Equity Will Depend on Procurement, Not Just Ideals

The biggest myth about AI in education is that access will naturally democratize learning. In reality, access is shaped by money, infrastructure, and implementation quality. Well-funded districts can buy secure platforms, train staff, negotiate data protections, and integrate tools into existing workflows. Under-resourced schools may get free consumer-grade tools with weaker privacy safeguards and less instructional support.

That matters because educational inequality is not only about test scores. It is also about which students get access to better feedback, better materials, and better-adapted systems. If AI tutoring and administrative automation are deployed unevenly, they could widen the gap between districts with strong capacity and those already struggling to keep up.

Language access is a useful example. AI translation can dramatically improve communication with families who do not speak the district’s primary language. That is a real gain. But if translation quality is inconsistent, or if schools fail to verify critical communications, the result can be confusion at precisely the point where trust matters most. Equity is not just about availability; it is about reliability.

Teachers Are the Bottleneck That Cannot Be Ignored

Policymakers and vendors sometimes speak as if AI adoption is mainly a software rollout. It is not. It is a labor transition. Teachers will be asked to use new tools, adapt lesson plans, monitor student use, and judge where AI is helpful versus harmful. That requires training, time, and clear boundaries.

Without that support, AI risks becoming one more top-down mandate that increases workload instead of reducing it. A district may purchase a platform promising efficiency but fail to fund the professional development needed to make it useful. Or it may introduce AI while leaving teachers responsible for spotting misuse, correcting model errors, and preserving academic integrity. In that scenario, the technology doesn’t lighten the load; it shifts it.

The most successful deployments are likely to be modest and specific rather than sweeping. Think of carefully bounded use cases: draft feedback, practice generation, translation, scheduling, and internal documentation. These are the areas where the return on deployment is clearest and the risk is most controllable.

Policy Is Lagging Behind the Market

The market for AI in education is moving faster than the regulatory framework around it. Edtech vendors are racing to add AI features because districts want productivity gains and parents want evidence that schools are adapting. Meanwhile, policymakers are still trying to define basic rules around student data, model transparency, age-appropriate use, and accountability when systems fail.

That lag is not unusual. Public institutions often adopt technology only after the market has already normalized it. But education is uniquely exposed because its users are minors, its outcomes are long-term, and its failures are hard to reverse. A poor recommendation in retail is annoying. A bad recommendation in a school district can affect learning trajectories for years.

What schools need is not a blanket ban or a blank check. They need procurement standards that require clear data policies, evidence of instructional value, human override, and accessibility. They need guidance on when AI can assist and when it should never make a final decision. And they need better measurement of whether these tools actually improve outcomes rather than merely reduce visible labor.

The Real Endgame: A More Adaptive System, or a Cheaper One?

AI could help education become more adaptive, more responsive, and less dependent on one-size-fits-all instruction. It could also be used to cut staffing, standardize teaching, and substitute automation for investment. Those are not the same future.

The difference will come down to governance. If AI is deployed as an efficiency tool alone, schools may save time but erode trust. If it is deployed as an augmentation layer for teachers and students, with strong rules around privacy, assessment, and equity, it can become a genuine force multiplier.

That is the real downstream consequence of AI in education. The technology is not just changing what students learn. It is forcing school systems to decide what kind of institution they want to be: one optimized for cost, or one built to improve human capability at scale. In the long run, that choice will matter more than any single chatbot, tutor, or grading tool.

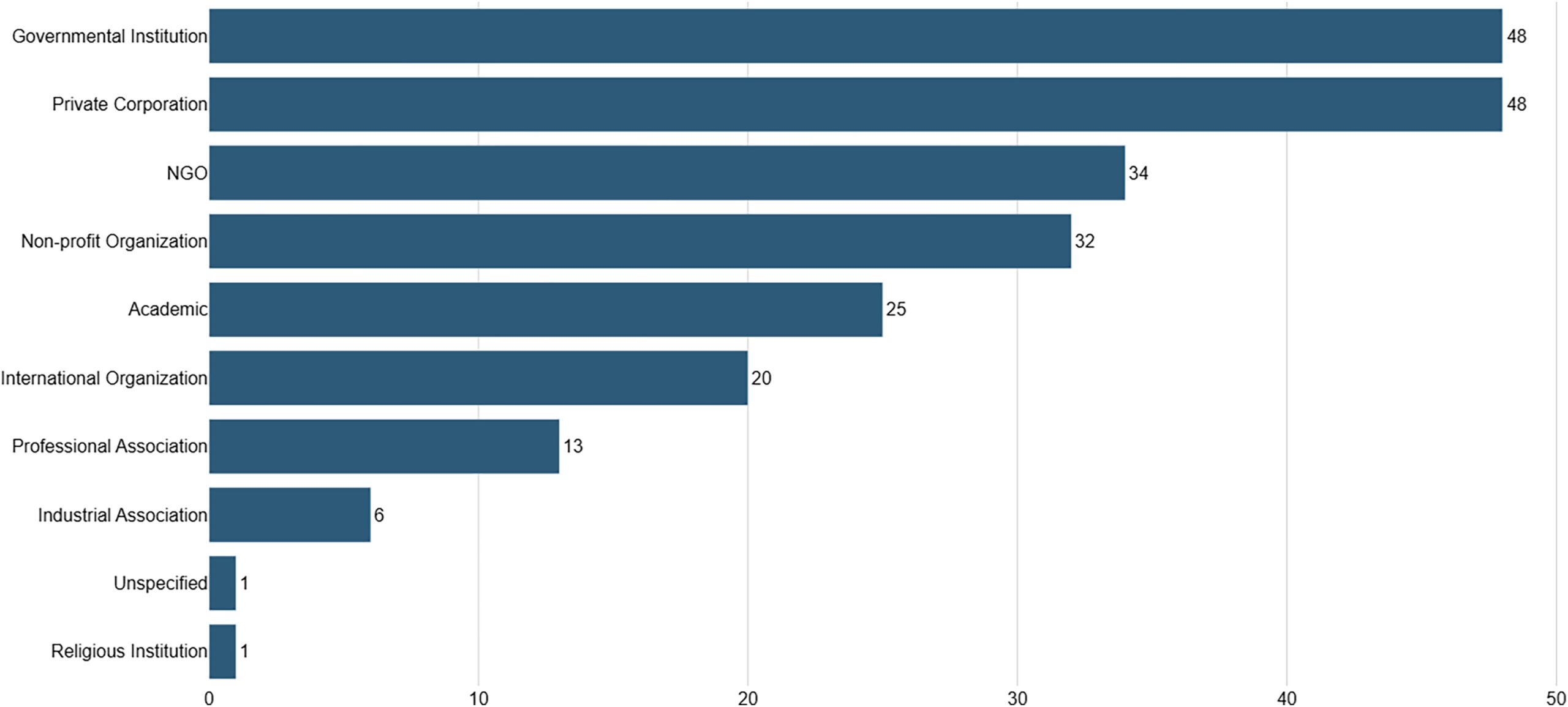

Image: AI governance guideline publications by institution types.jpg | https://www.cell.com/patterns/fulltext/S2666-3899(23)00241-6 | License: CC BY 4.0 | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:AI_governance_guideline_publications_by_institution_types.jpg