What a hyperscale data center actually is

A hyperscale data center is a facility built to support enormous, rapidly expandable computing loads with a high degree of standardization and automation. The term usually refers to the infrastructure used by the largest cloud and internet companies—think Microsoft, Google, Amazon, Meta, and a small number of other operators with similarly massive footprints. These facilities are not simply “big data centers.” They are designed from the ground up to add capacity in repeatable blocks, deploy hardware at industrial scale, and keep operations efficient even as power draw climbs into the tens or hundreds of megawatts.

The important distinction is not just size. Plenty of enterprises run large data centers. Hyperscale implies a specific operating model: tightly integrated power, cooling, networking, and software management, all optimized for dense fleets of identical or near-identical servers. That uniformity matters because it lowers the cost per unit of compute and makes it possible to roll out thousands of machines in a coordinated way.

Why hyperscale is an industrial model, not a product category

The phrase “hyperscale data center” can sound like a marketing label, but the underlying economics are industrial. These facilities behave more like factories than office IT rooms. They consume large volumes of electricity, depend on elaborate supply chains for transformers and switchgear, and require careful planning around land, water, grid interconnection, and fiber access. In many regions, the limiting factor is no longer demand for compute; it is whether the local power system can physically support the load.

This is why hyperscale has become a constraint story. Companies that want to train large AI models or serve global cloud workloads at low latency need power, cooling, and network capacity at a scale that cannot be improvised. The bottleneck is often upstream of the servers themselves. A warehouse full of GPUs is useless if the substation is delayed, the utility interconnect is capped, or the cooling design cannot handle the thermal density.

How hyperscale compares with colocation, enterprise, and edge

To understand hyperscale, it helps to compare it with other deployment paths.

Enterprise data centers are typically built for one organization’s internal workloads. They may be substantial, but they are usually managed with more customized hardware, lower standardization, and less appetite for the relentless expansion that characterizes hyperscale operations. They are often built to support business applications, databases, and internal services rather than massive public cloud platforms.

Colocation facilities rent space, power, and connectivity to tenants. They can scale impressively and are critical to the broader internet infrastructure, but the operator is usually not designing the entire stack around a single fleet of homogeneous servers. Colo works well when companies want flexibility, geographic reach, or to avoid building their own facilities. Hyperscalers, by contrast, usually prefer ownership or highly customized leasing because they want architectural control and cost efficiency at very large volumes.

Edge deployments place compute closer to users or devices, reducing latency for time-sensitive tasks. They are useful for video delivery, industrial automation, retail systems, and some AI inference workloads. But edge sites are not a replacement for hyperscale. They are a complement. The heavy lifting—training models, storing massive datasets, running broad cloud services—still tends to happen in centralized hyperscale campuses.

The tradeoff is simple: hyperscale wins on efficiency and control at enormous scale; colocaton wins on flexibility and speed to market; edge wins on proximity and latency. Most modern compute stacks use some combination of all three.

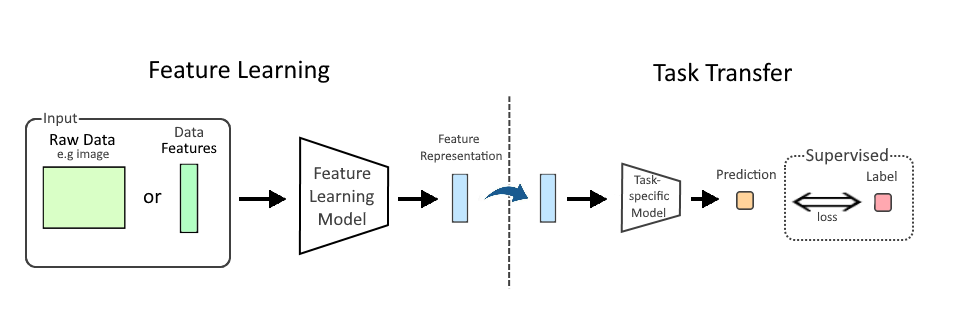

The hardware stack inside a hyperscale campus

At the server level, hyperscale data centers are increasingly defined by accelerated computing. Traditional CPU-centric racks still matter, but AI training and high-performance cloud services have pushed GPUs, custom accelerators, and high-bandwidth interconnects to the center of the design. That changes the physical plant. GPU clusters draw more power per rack, produce more heat, and depend on low-latency networking to keep expensive chips busy.

As a result, the facility is no longer just a place to plug in servers. It is an engineered system that has to balance power distribution, thermal management, rack density, and fault tolerance. Advanced liquid cooling, rear-door heat exchangers, direct-to-chip cooling, and carefully segmented power architectures are becoming more common because air cooling alone is often not enough for modern AI loads.

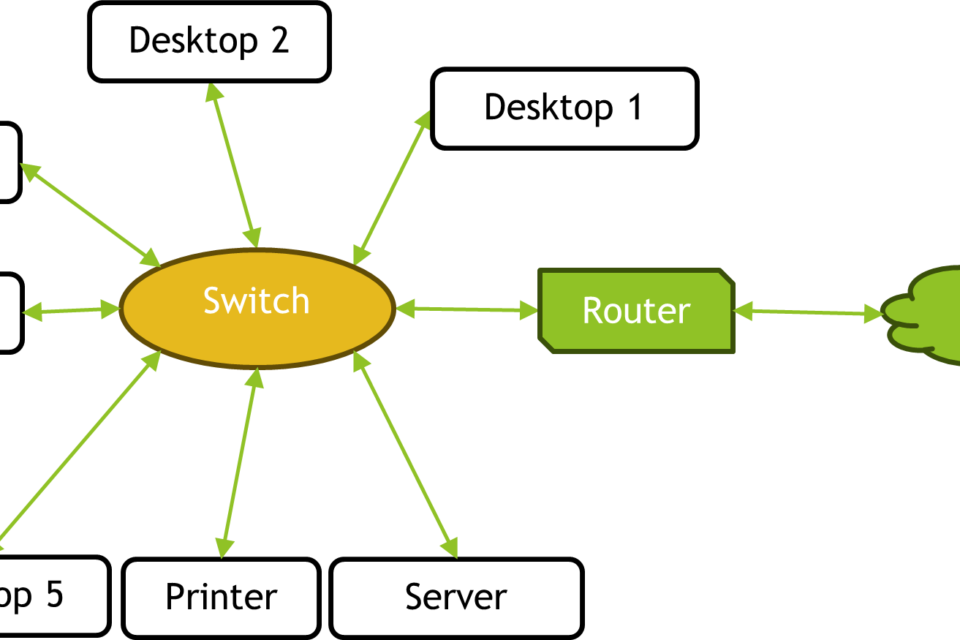

The network is equally important. Hyperscale operators rely on high-radix switching, leaf-spine topologies, and software-defined networking to move data efficiently between machines, storage, and service tiers. When you scale out to thousands of nodes, network design becomes a core performance variable, not just plumbing.

Why power, not floor space, is the real scarcity

People often imagine data centers as real-estate problems. In hyperscale, the more important constraint is power density. A modern facility can occupy a very large physical footprint, but the decisive metric is how many megawatts it can receive, distribute, and remove as heat. That is why utility access, transmission capacity, and substation timelines have become strategic issues for cloud providers and AI builders.

This has changed site selection. Operators now look for regions with available grid capacity, favorable permitting, access to renewable generation or long-term power contracts, and enough water or cooling flexibility to support the thermal load. In some cases, companies pursue on-site generation, battery storage, or even behind-the-meter arrangements to reduce grid dependency. These are not fringe decisions; they are responses to the reality that compute growth is increasingly bounded by energy infrastructure.

What hyperscale does well, and where it creates friction

Hyperscale architecture has clear advantages. It drives down unit costs through standardization. It supports rapid software updates and fleet-wide automation. It enables global services that can absorb huge demand spikes without building bespoke infrastructure for every deployment. For cloud customers, that means access to elastic compute, storage, and AI capacity on demand.

But the model has costs. Hyperscale facilities concentrate risk: outages, supply-chain disruptions, or energy shortages can ripple through large user populations. They can also intensify local debates over land use, water use, grid reliability, and community impact. And because the biggest operators design around their own software and hardware preferences, hyperscale is not always the best fit for companies that need specialized legacy systems or regulatory isolation.

There is also a strategic tradeoff between centralization and resilience. Centralized compute is efficient, but it can create dependency on a relatively small number of locations and operators. That is one reason many organizations are now blending hyperscale cloud with colo, regional sites, and edge infrastructure rather than treating one model as universally superior.

Why hyperscale matters more in the AI era

AI has made hyperscale more visible because model training and large-scale inference consume exactly the resources these campuses are built to deliver: dense power, fast networking, and industrialized operations. Training frontier models can require thousands of GPUs synchronized across large clusters, which means the facility must be designed for both compute density and communication efficiency.

That does not mean every AI workload belongs in hyperscale. Smaller models, private deployments, latency-sensitive inference, and regulated workloads may sit better in regional clouds, enterprise environments, or specialized on-prem systems. But the frontier of AI infrastructure is clearly pushing hyperscale toward higher power densities, more complex cooling, and tighter coupling with the energy sector.

The practical takeaway

A hyperscale data center is best understood as a large-scale compute factory: standardized, power-hungry, software-managed, and optimized to turn electricity into digital services as efficiently as possible. Its significance is not that it is merely bigger than other data centers, but that it sits at the center of a new industrial stack linking semiconductors, networking, cooling, and energy infrastructure.

For businesses, the choice among hyperscale, colo, enterprise, and edge is really a choice among different tradeoffs: control versus flexibility, efficiency versus proximity, and scale versus resilience. For the industry, the rise of hyperscale means the hard constraints are shifting upward—from servers and racks to grid capacity, cooling design, and long-term energy planning. That is why the future of compute is increasingly being decided outside the server room.

Image: BalticServers data center.jpg | Self-photographed | License: CC BY-SA 3.0 | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:BalticServers_data_center.jpg