The promise is real, but the stack is unforgiving

AI and robotics are often discussed as if they were naturally fusing into a single category. In practice, they solve different problems. AI is good at interpretation, prediction, and decision-making under uncertainty. Robotics is good at acting in the physical world, where inertia, friction, power limits, sensor noise, and safety rules all matter. Put them together and you get systems that can see, plan, and move with far more flexibility than traditional automation. But you also get a stack with hard constraints that software-only AI products never face.

That difference matters because the bottlenecks are not abstract. A language model can tolerate a bit of latency. A warehouse robot avoiding a pallet jack cannot. A vision system can make a guess about an object class. A robotic arm picking up a fragile package needs millimeter-level repeatability and low-jitter control. The closer a machine gets to the physical world, the less useful broad claims about “AI capability” become and the more important the underlying infrastructure becomes.

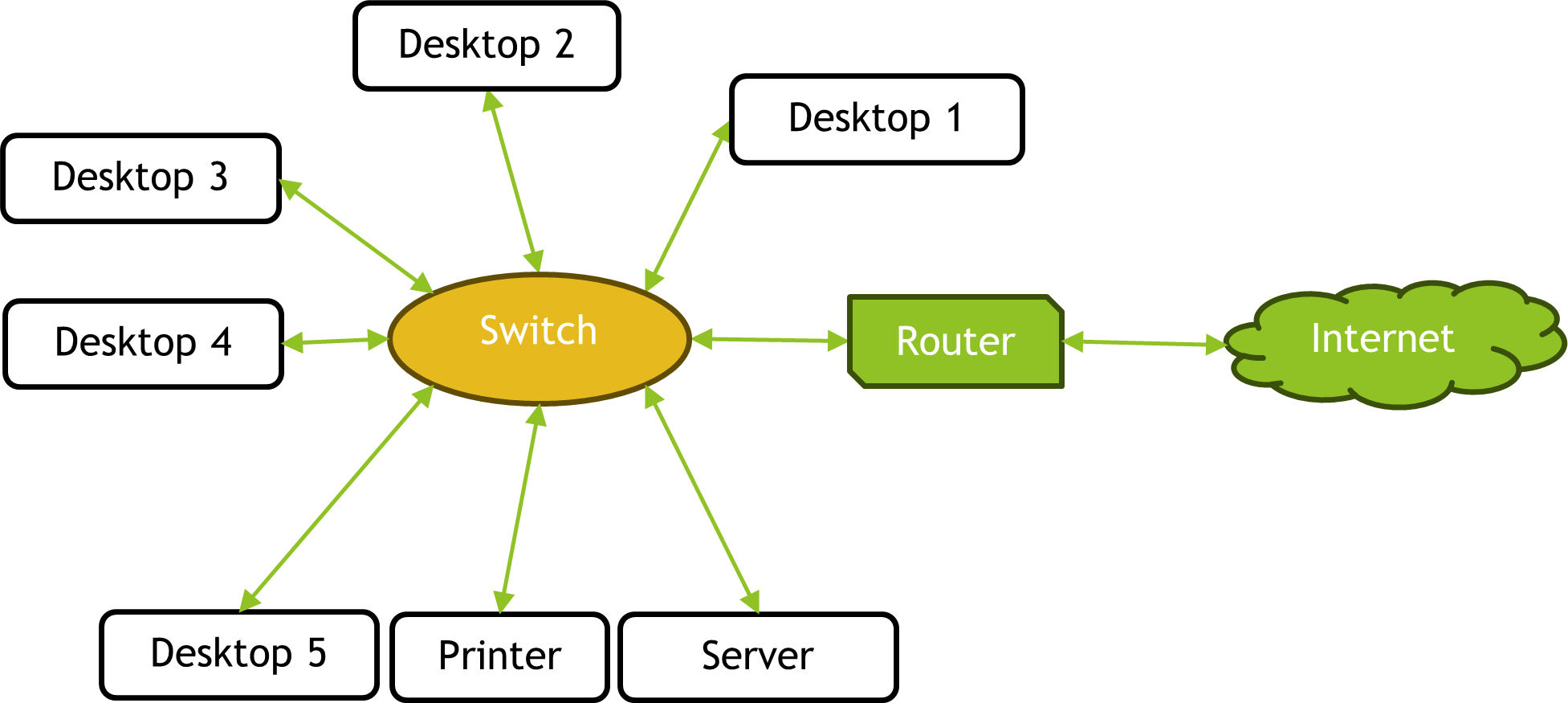

Three layers, three very different jobs

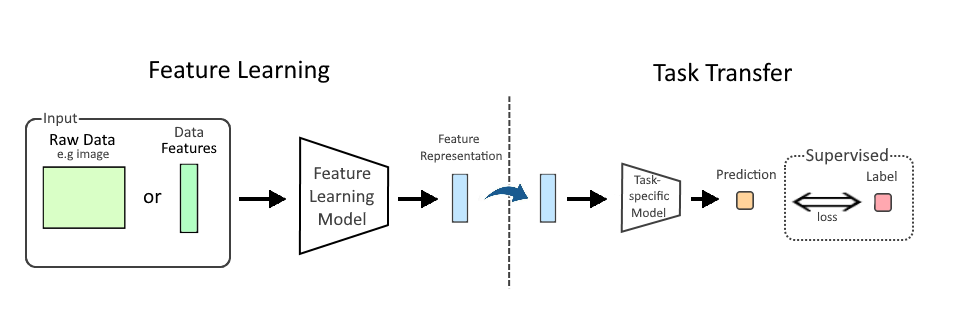

Most modern robotic systems can be understood as three layers: perception, planning, and control. AI has transformed the first two, while the third remains stubbornly physical.

Perception turns sensor input into meaning. Cameras, depth sensors, LiDAR, tactile sensors, and force sensors all generate noisy data. Machine learning models help robots identify objects, estimate pose, track motion, and understand scene context. This is where AI has delivered some of its clearest gains, especially in unstructured environments like warehouses, farms, labs, and retail back rooms.

Planning turns perception into a sequence of actions. This may involve route planning, grasp selection, task scheduling, or dynamic obstacle avoidance. Here, AI is useful because the real world rarely presents a single correct answer. There are tradeoffs among speed, safety, energy use, and success probability. Modern systems can evaluate many more possibilities than classic rule-based automation.
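One way to picture that tradeoff is a weighted cost over candidate actions, with safety treated as a hard limit rather than one more term to trade away. The sketch below is illustrative only: the `Candidate` fields, the weights, and the `MAX_RISK` threshold are assumptions made for this example, not values from any particular planner.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    duration_s: float      # estimated time to complete the action
    collision_risk: float  # estimated probability of an unsafe event, 0..1
    energy_wh: float       # estimated energy use
    success_prob: float    # estimated probability the task succeeds, 0..1


# Hypothetical weights; a real system would tune or learn these.
WEIGHTS = {"time": 1.0, "risk": 200.0, "energy": 0.5, "failure": 50.0}
MAX_RISK = 0.05  # hypothetical hard safety limit


def cost(c: Candidate) -> float:
    """Lower is better: combines time, risk, energy, and failure likelihood."""
    return (
        WEIGHTS["time"] * c.duration_s
        + WEIGHTS["risk"] * c.collision_risk
        + WEIGHTS["energy"] * c.energy_wh
        + WEIGHTS["failure"] * (1.0 - c.success_prob)
    )


def choose(candidates):
    """Drop candidates that exceed the risk limit, then pick the cheapest."""
    safe = [c for c in candidates if c.collision_risk <= MAX_RISK]
    if not safe:
        return None  # no acceptable option: the caller should stop or replan
    return min(safe, key=cost)


options = [
    Candidate("fast", duration_s=10, collision_risk=0.08, energy_wh=5, success_prob=0.95),
    Candidate("direct", duration_s=14, collision_risk=0.02, energy_wh=6, success_prob=0.90),
    Candidate("cautious", duration_s=25, collision_risk=0.005, energy_wh=7, success_prob=0.98),
]
best = choose(options)
```

Here the fastest option is rejected outright because it exceeds the risk limit, and the remaining two are compared on the weighted cost. Changing the weights changes the winner, which is the point: there is no single correct answer, only a stated preference among tradeoffs.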

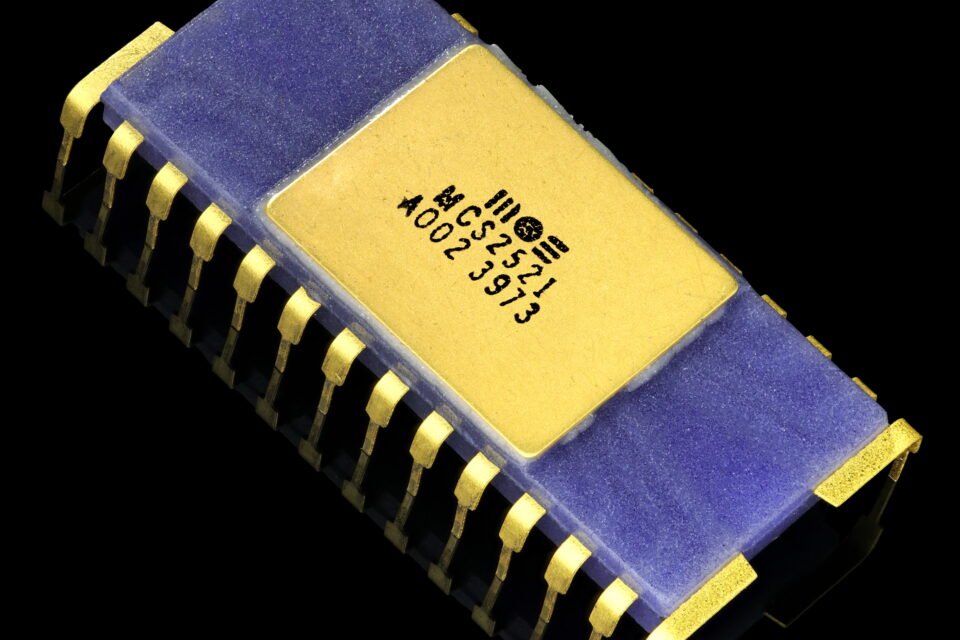

Control is where theory meets hardware. Motors, actuators, gearboxes, batteries, drive electronics, and embedded controllers have to execute commands within tight bounds. If perception is wrong or delayed, the robot can fail. If control loops are unstable, the robot can shake, miss, or damage itself. AI can inform control, but it does not replace the physics.
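To make the idea of a control loop with tight bounds concrete, here is a minimal sketch of a discrete PI (proportional-integral) velocity loop with a clamped output. Everything specific in it is an assumption for illustration: the first-order motor model, the time constant `tau`, the gains, and the actuator limit `u_max`. Real controllers run on embedded hardware with measured plant parameters.

```python
def simulate(kp, ki, setpoint=1.0, dt=0.01, steps=500, tau=0.5, u_max=10.0):
    """Simulate a PI loop driving an assumed first-order motor model.

    Plant (assumption): dv/dt = (u - v) / tau, integrated with Euler steps.
    Returns the velocity after each step.
    """
    v = 0.0          # current velocity
    integral = 0.0   # accumulated error for the integral term
    history = []
    for _ in range(steps):
        error = setpoint - v
        u_raw = kp * error + ki * integral
        # Actuators saturate: clamp the command to what the hardware can deliver.
        u = max(-u_max, min(u_max, u_raw))
        # Simple anti-windup: only accumulate error while the output is not clamped.
        if u == u_raw:
            integral += error * dt
        v += dt * (u - v) / tau
        history.append(v)
    return history


history = simulate(kp=2.0, ki=5.0)
```

With these assumed gains the simulated velocity settles close to the setpoint within the five simulated seconds. The clamp and the anti-windup check are the parts that reflect hardware limits: no matter what an upstream model requests, the actuator can only deliver so much.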

The deployment choice: cloud, edge, or hybrid

The most important architecture question is not whether to use AI, but where to run it. That choice determines latency, cost, reliability, and scalability.

Cloud-centered robotics pushes more inference and fleet coordination into remote data centers. This can be attractive for training, logging, model updates, and long-horizon planning. The advantage is centralized compute: powerful GPUs, large datasets, and easier iteration. The downside is equally clear: physical systems tolerate round-trip network delay, connectivity loss, and variable bandwidth poorly. A robot operating in a busy factory or hospital cannot depend on the cloud for every critical decision.

Edge-centered robotics keeps inference on the machine or on nearby on-premise hardware. This improves responsiveness and resilience. It also reduces data transfer costs and can simplify privacy-sensitive deployments. The tradeoff is hardware limits. Onboard compute is constrained by power, heat, size, and weight. Every watt matters, especially in mobile robots. Edge systems therefore force model compression, through techniques such as quantization and pruning, along with careful scheduling. The result is often less model capacity than teams want, even if it is the right engineering choice.
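As a rough illustration of what quantization trades away, the sketch below applies symmetric 8-bit quantization to a short list of weights in plain Python. It is a toy version of the idea, not how any specific framework implements it. The function names are made up for this example, and production toolchains typically quantize per tensor or per channel with calibration data.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Rounding error per weight is bounded by half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now fits in one byte instead of the four bytes of a 32-bit float, at the cost of a rounding error of at most half a quantization step. Whether that error is acceptable depends on the model and the task, which is why edge deployments need careful evaluation after compression.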

Hybrid architectures are emerging as the default in serious deployments. The robot performs time-sensitive perception and control locally, while the cloud handles retraining, fleet analytics, simulation, and policy updates. This is usually the most practical model, but it comes with integration complexity. Teams must manage software versioning, safety certification, fallback behavior, cybersecurity, and data pipelines that connect the robot to the fleet without making the fleet dependent on a live network connection.
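The fallback requirement can be sketched as follows: the robot always runs a locally stored policy, and a cloud update is applied only if it arrives and passes a local check. The `PolicyStore` class and the `fetch_latest` and `validate` callables are hypothetical names for this illustration. A real deployment would add signing, staged rollout, and rollback.

```python
class PolicyStore:
    """Holds the policy version the robot is currently running."""

    def __init__(self, initial_version):
        self.active_version = initial_version

    def try_update(self, fetch_latest, validate):
        """Attempt a cloud update; return True only if a new policy was adopted.

        fetch_latest: callable that returns a candidate version, or raises
                      OSError (includes TimeoutError and ConnectionError).
        validate:     callable that returns True if the candidate passes
                      local checks before it is allowed to run.
        """
        try:
            candidate = fetch_latest()
        except OSError:
            # Offline or timed out: keep running the last known good policy.
            return False
        if candidate == self.active_version:
            return False
        if not validate(candidate):
            return False
        self.active_version = candidate
        return True
```

The design choice worth noting is that every failure path leaves `active_version` untouched. The network is an optional source of improvements, never a dependency for continued operation.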

Where the stack breaks down

The hardest problems are often the least glamorous.

Latency and determinism are major failure points. In robotics, average response time matters less than worst-case response time. A model that is fast 99 percent of the time but occasionally stalls can be dangerous. That is why industrial systems still rely heavily on deterministic controllers and safety interlocks, even when AI is involved upstream.
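A small numeric sketch shows why averages mislead here. The latency samples and the 20 ms deadline below are invented for illustration: 99 inferences finish in 8 ms and one stalls for 250 ms.

```python
def latency_report(samples_ms, deadline_ms):
    """Summarize latency samples against a hard deadline."""
    ordered = sorted(samples_ms)
    n = len(ordered)
    # Nearest-rank 99th percentile: ceil(0.99 * n), computed with integers.
    rank = -(-99 * n // 100)
    return {
        "mean_ms": sum(ordered) / n,
        "p99_ms": ordered[rank - 1],
        "worst_ms": ordered[-1],
        "deadline_misses": sum(1 for s in ordered if s > deadline_ms),
    }


samples = [8.0] * 99 + [250.0]   # hypothetical measurements
report = latency_report(samples, deadline_ms=20.0)
```

In this made-up data set the mean sits comfortably under the deadline, and even the nearest-rank 99th percentile looks healthy, yet the worst case misses the deadline by more than a factor of ten. Only the maximum and the miss count reveal the stall, which is the argument for budgeting against worst-case behavior and keeping a deterministic fallback downstream.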

Data quality is another constraint. Robotics teams do not just need more data; they need the right kind of data. They need labeled edge cases, failed grasps, rare obstacles, different lighting conditions, wear-and-tear scenarios, and long-tail exceptions. Unlike internet software, robots operate in environments that drift physically: floors change, grippers age, items vary, and sensors degrade. Models trained on clean demo data often struggle in production.

Power and thermal budgets are easy to underestimate. A warehouse robot with a GPU module can become too hot, too expensive, or too power-hungry to run an entire shift. In mobile and humanoid platforms, compute competes directly with battery life. If AI consumes too much power, the robot spends more time charging than working.
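The arithmetic behind that tradeoff is simple enough to sketch. All numbers below are hypothetical (battery capacity, load figures, usable fraction, and charge time); they only show how the compute load feeds into runtime and duty cycle.

```python
def runtime_hours(battery_wh, loads_w, usable_fraction=0.8):
    """Estimated runtime: usable battery energy divided by total average draw."""
    total_w = sum(loads_w.values())
    return battery_wh * usable_fraction / total_w


def working_fraction(runtime_h, charge_h):
    """Share of each work/charge cycle that the robot spends working."""
    return runtime_h / (runtime_h + charge_h)


# Hypothetical mobile robot with a 1000 Wh battery and a 2 hour charge time.
with_gpu = runtime_hours(1000, {"drive": 120, "sensors": 20, "compute": 60})
low_power = runtime_hours(1000, {"drive": 120, "sensors": 20, "compute": 10})

duty_with_gpu = working_fraction(with_gpu, charge_h=2.0)
duty_low_power = working_fraction(low_power, charge_h=2.0)
```

Under these assumptions, cutting the compute draw from 60 W to 10 W lengthens each run by roughly a third. The model ignores thermal throttling, battery aging, and variable drive load, all of which tend to make the real picture worse rather than better.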

Safety and certification slow everything down for good reason. A robot that can injure a person, damage inventory, or interfere with critical infrastructure must fail safely, not merely perform well in benchmarks. This pushes design teams toward redundancy, constrained behaviors, emergency stops, geofencing, and layered verification. The more autonomy a system has, the more carefully its failure modes must be engineered.
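Layered verification can be pictured as a gate between whatever proposes a motion and the motors that execute it. The sketch below is a simplified illustration, not a certified safety function: the `Geofence` class, the `gate_velocity` function, and the policy of stopping outside the fence are assumptions for this example. In real systems such checks usually live in dedicated safety-rated hardware or controllers.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Geofence:
    x_min: float
    x_max: float
    y_min: float
    y_max: float

    def contains(self, x, y):
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def gate_velocity(vx, vy, x, y, estop_pressed, fence, max_speed):
    """Return the velocity command that is actually allowed to reach the drive."""
    # Layer 1: the emergency stop overrides everything.
    if estop_pressed:
        return (0.0, 0.0)
    # Layer 2: outside the permitted area, stop (simplified policy).
    if not fence.contains(x, y):
        return (0.0, 0.0)
    # Layer 3: clamp speed while preserving the requested direction.
    speed = math.hypot(vx, vy)
    if speed > max_speed:
        k = max_speed / speed
        return (vx * k, vy * k)
    return (vx, vy)


fence = Geofence(0.0, 10.0, 0.0, 10.0)
allowed = gate_velocity(3.0, 4.0, x=1.0, y=1.0, estop_pressed=False,
                        fence=fence, max_speed=1.0)
```

Each layer is deliberately simple and independent of the planner's reasoning. The gate does not need to know why a command was issued, only whether it is permitted, which keeps the failure modes easy to enumerate and test.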

Why some robots benefit more than others

Not every robotics category gains equally from AI. The biggest wins are in semi-structured environments where the robot must deal with variation but not with complete chaos.

Warehousing and logistics are prime candidates. Items vary, aisles change, and workflows are repetitive enough to support learning. AI improves picking, sorting, navigation, and exception handling. The economics work because the same system can be deployed at scale across many facilities.

Manufacturing is more mixed. Structured lines still favor traditional automation because fixed tasks reward speed and reliability. AI is more valuable for quality inspection, adaptive handling, and flexible cells where product mix changes frequently.

Healthcare and labs offer clear use cases but impose stricter reliability, privacy, and regulatory demands. The systems need excellent perception and conservative behavior. The technology may be ready, but the deployment path is slower and more controlled.

Field robotics in agriculture, construction, mining, and energy infrastructure often has the highest upside and the highest complexity. Terrain is irregular, conditions are harsh, and maintenance is difficult. AI helps, but ruggedization, sensor cleanup, and uptime engineering often matter more than model architecture.

The real competitive moat is systems engineering

It is tempting to think the winner will be the company with the best model. That is not how robotics usually works. The durable advantage belongs to the team that can integrate sensors, compute, mechanical design, software, safety, deployment, and service into a system that keeps working after the demo ends.

In that sense, robotics is becoming less like a pure hardware business and less like a pure AI business. It is becoming a full-stack infrastructure business. Success depends on getting the compute in the right place, keeping the control loops stable, managing energy and heat, and building enough operational discipline that the machine remains useful when conditions change.

That is the practical lesson of AI and robotics working together. AI makes robots more capable. Robotics makes AI accountable to reality. The frontier is not a sci-fi blend of the two. It is the infrastructure that decides whether intelligence can survive contact with the physical world.

Image: 2019 U2 Q6 Part D.png | Own work | License: CC BY 4.0 | Source: Wikimedia | https://commons.wikimedia.org/wiki/File:2019_U2_Q6_Part_D.png